The 17 top SEO tools to build a modern, AI-ready tech stack

Search is changing fast, and slapping an 'AI' label on an outdated database doesn't make a tool worth its premium price tag. To find the right SEO tools, look past the hype and examine how each platform handles daily workflows. The most effective options connect keyword discovery, technical auditing, and content optimization into one system instead of leaving each in its own data silo. Industry standards like Semrush, Ahrefs, and Google Search Console provide foundational data, while specialized platforms fill critical gaps in localized rank tracking and deep site architecture analysis.

According to Productiv research, the average company department juggles around 87 different SaaS products, and businesses maintain anywhere from 106 to over 300 applications across their total software stack. The resulting fragmentation creates bloated workflows where disconnected subscriptions drain both budget and time, trapping strategists in administrative maintenance. You don't need thirty overlapping dashboards to rank a website. This guide evaluates the landscape to help you consolidate those subscriptions into a lean, purpose-built operation. Here are 17 in-depth platform evaluations categorized by modern SEO workflow stages.

Evaluation methodology and testing criteria

Adapting to personalized search metrics

According to Whatagraph's industry analysis, AI search results are highly personalized, making standard ranking metrics difficult to track without specific tools. When evaluating data quality, a platform's database must genuinely reflect how generative engines surface answers. Old search volume numbers from a static database fail to capture how large language models alter visibility.

Generative Engine Optimization requires a different analytical approach. Ten blue links no longer represent the reality of a modern search engine results page. Features like AI Overviews and dynamic local packs shift depending on the user's location and search history. Tools score higher when their databases update frequently enough to capture these volatile SERP features instead of pulling historical averages from previous years. A database showing ten thousand monthly searches means nothing if an AI summary completely monopolizes the top of the screen.

Escaping the restrictive credit limit trap

You review the agency's quarterly software expenses and realize you pay separate subscriptions for keyword research, content grading, and technical auditing. The tech stack becomes cost-prohibitive, and strict usage caps hit exactly when you run large-scale client audits. Platforms that offer generous crawl and export thresholds stand out over those that restrict high-frequency data exploration.

Predictable agency operations require transparent software pricing. When a technical SEO runs a site crawl, finds an error, implements a fix, and runs another crawl to verify it, the platform should support that iterative workflow. Tools that charge per click or per filter applied create friction. Platforms lose points in this evaluation if their baseline pricing models restrict natural data exploration.

Closing the click data reporting gap

Siloed reporting fractures strategy. Marketers spend only 18% of their time on creative thinking, losing the rest to manual reporting and optimization tasks according to PHD and WARC data. Another study by Datorama indicates marketers lose at least 3.55 hours per week strictly to manual data management. Without native integration with actual click data, reporting remains an educated guessing game.

Direct performance metrics close that gap. With platforms like RankDots, you pull real Clicks, Impressions, CTR, and Rank directly through a Google Search Console integration. This gives you a verified baseline globally and by specific countries. This exact type of direct API connection is a core requirement in our evaluation. When a tool pairs its own proprietary crawl data with your actual click performance, you can communicate clear ROI to clients instead of explaining why two different dashboards show conflicting traffic numbers. You base optimizations on verified interactions, avoiding theoretical search volumes.
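
To make the "globally and by specific countries" baseline concrete, here is a minimal sketch of rolling Search Console rows up into per-country totals. The row shape (`keys`, `clicks`, `impressions`, `position`) is modeled on what the Search Analytics API returns when queried with country and query dimensions. The function name and sample numbers are hypothetical, and this is not a description of how RankDots or any other platform implements its integration.

```python
from collections import defaultdict


def summarize_by_country(rows):
    """Aggregate Search Console style rows into per-country totals.

    Each row is assumed to carry keys=[country, query], clicks,
    impressions, and position (average rank for that row).
    """
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "weighted_pos": 0.0})
    for row in rows:
        country = row["keys"][0]
        bucket = totals[country]
        bucket["clicks"] += row["clicks"]
        bucket["impressions"] += row["impressions"]
        # Weight each row's position by impressions so busy queries count more.
        bucket["weighted_pos"] += row["position"] * row["impressions"]

    summary = {}
    for country, bucket in totals.items():
        imps = bucket["impressions"]
        summary[country] = {
            "clicks": bucket["clicks"],
            "impressions": imps,
            "ctr": bucket["clicks"] / imps if imps else 0.0,
            "avg_position": bucket["weighted_pos"] / imps if imps else None,
        }
    return summary


# Invented sample data for illustration only.
sample_rows = [
    {"keys": ["usa", "seo tools"], "clicks": 30, "impressions": 600, "position": 4.0},
    {"keys": ["usa", "rank tracker"], "clicks": 10, "impressions": 400, "position": 9.0},
    {"keys": ["gbr", "seo tools"], "clicks": 5, "impressions": 100, "position": 6.0},
]
report = summarize_by_country(sample_rows)
```

Recomputing CTR from summed clicks and impressions, rather than averaging per-row CTR values, keeps low-volume queries from skewing the country total.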

Top SEO Tools Feature Comparison

| Platform | Primary Workflow | Standout Capability | Base Price |
|---|---|---|---|
| Semrush | Baseline Discovery | 25.3 billion keyword database | Starts at $139.95/month |
| Ahrefs | Competitive Auditing | Frequent backlink data updates | Starts at $29/month |
| Screaming Frog | Technical Architecture | Custom HTML extraction via regex | £199/year |
| Google Search Console | Verified Analytics | Actual impression and click data | Free |
| Surfer SEO | Semantic Drafting | Real-time competitor content scoring | Starts at $99/month |
| Clearscope | Enterprise Content | Assigns real-time SERP grades | Starts at $170/month |
| MarketMuse | Topical Mapping | Measures domain topical authority | Starts at $149/month |
| Nightwatch | Visibility Tracking | Tracks LLM and local visibility | Starts at $32/month |
| SE Ranking | Daily Monitoring | Tracks 2,000 daily keywords | Starts at $129/month |
| Moz | Accessible Reporting | Prompt tracking for GPT | Starts at $99/month |
| Majestic | Off-Page Forensics | Trust Flow and Citation Flow | Starts at $49.99/month |
| Mangools | Intent Verification | Local SERP analysis | Starts at $29/month |
| Ubersuggest | Low-Cost Discovery | Basic search volume and CPC | Starts at $29/month |
| Frase | Brief Generation | Scrapes SERPs for content briefs | Starts at $14.99/month |
| All in One SEO | Native Publishing | Automates smart XML sitemaps | Starts at $49.60/year |
| BrightEdge | Enterprise Intelligence | Data Cube X global tracking | Quote-based pricing |
| ChatGPT | Data Processing | Generates reasoning using GPT-5 | Starts at $20/month |

Categorized tool awards

Preventing software stack bloat

Companies lose between 20% and 30% of their annual revenue due to inefficiencies caused by incorrect or siloed data based on IDC research. Gartner similarly estimates that the average organization loses $12.9 million every year directly due to poor data quality resulting from operational silos. A categorized tech stack prevents overspending on overlapping secondary features.

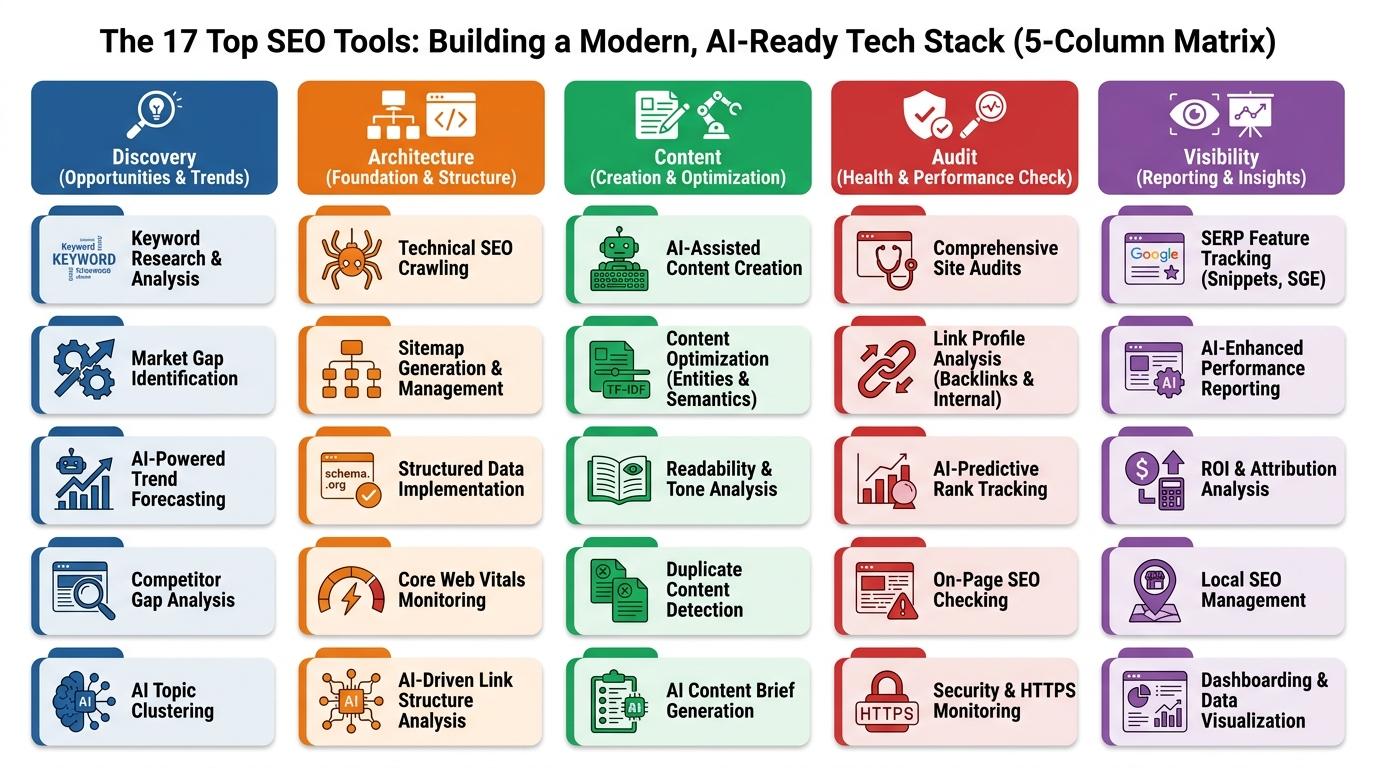

A tight workflow requires strictly classifying platforms into Discovery, Architecture, Content, Audit, and Visibility. This prevents buying the same redundant backlink checker three times. Most modern platforms try to do everything, resulting in a master-of-none scenario where you pay premium prices for secondary features you never use. Focusing on specific category winners ensures you pay for depth over breadth, allowing you to connect specialized systems into a highly efficient daily operation.

Solving the site architecture bottleneck

You download thousands of raw keywords into a spreadsheet to manually map out a new pillar-and-cluster architecture for a client. Manually sorting search intent and grouping topics takes hours, and legacy suites rarely automate the clustering required to scale this process efficiently. This operational bottleneck is exactly why tools with dedicated Architecture and Discovery features score higher in our evaluations.

Spreadsheets fail at scale. When you deal with large sites, manual mapping leads to keyword cannibalization and missed internal linking opportunities. Platforms that automatically cluster keywords by SERP overlap take a process that usually consumes an entire afternoon and compress it into minutes. With advanced systems, you automate this grouping into a structured hierarchy while adjusting the SERP overlap threshold to control the sensitivity. This programmatic approach prevents duplicate targeting before writers even draft their first outline.
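
The SERP-overlap idea can be sketched in a few lines. The example below is a simplified greedy version written for illustration, not any vendor's actual algorithm: two keywords land in the same cluster when their top results share at least `min_shared` URLs with the cluster's first keyword. The function name, the threshold parameter, and the sample SERPs are all assumptions.

```python
def cluster_by_serp_overlap(serps, min_shared=3):
    """Group keywords whose top-ranking URLs overlap.

    serps maps keyword -> list of ranking URLs. A keyword joins the first
    cluster whose seed keyword shares at least min_shared URLs with it;
    otherwise it starts a new cluster.
    """
    clusters = []  # each entry: (seed URL set, list of member keywords)
    for keyword, urls in serps.items():
        url_set = set(urls)
        for seed_urls, members in clusters:
            if len(url_set & seed_urls) >= min_shared:
                members.append(keyword)
                break
        else:
            # No existing cluster was similar enough, so start a new one.
            clusters.append((url_set, [keyword]))
    return [members for _, members in clusters]


# Invented SERP data for illustration only.
serps = {
    "seo tools": ["a.com", "b.com", "c.com", "d.com"],
    "best seo software": ["a.com", "b.com", "c.com", "e.com"],
    "roof repair": ["x.com", "y.com", "z.com", "a.com"],
}
strict = cluster_by_serp_overlap(serps, min_shared=3)
loose = cluster_by_serp_overlap(serps, min_shared=1)
```

Raising `min_shared` produces more, tighter clusters, while lowering it merges loosely related terms onto one page. That is the sensitivity control the overlap threshold provides.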

The five essential workflow categories

The following recommendations fit neatly into specific stages of the daily campaign execution cycle.

With Discovery tools, you handle raw term collection, find the initial seed topics, and analyze search volume. Architecture tools let you structure those raw terms into logical topical maps and hierarchies. Using Content tools, you grade drafts against top-ranking competitors to ensure high semantic relevance. With Audit tools, you monitor the technical health of the site, tracking indexation and crawl errors. Visibility tools let you track exact ranking fluctuations across local and global LLM-driven search results.

Semrush

Massive keyword database scale

Semrush relies on its database of 25.3 billion keywords. That sheer scale drives comprehensive search volume analysis across almost any niche, including obscure B2B industries where other tools show zero search volume. That much historical and current search data allows strategists to spot emerging trends before competitors react.

The platform also covers technical site health right out of the gate, auditing up to 100,000 pages per month. The crawler identifies broken links, redirect chains, and Core Web Vitals issues with a high degree of accuracy. Together, the database scale, broad tracking, and deep auditing cover the essential baseline.

Entry-level tracking limitations

The entry-level plan imposes a hard cap, tracking up to 500 keywords. That threshold disappears quickly when managing multiple client campaigns or building out extensive topic clusters. Agencies scaling their tracking operations often hit this ceiling within the first few weeks of deployment.

A large e-commerce site or a comprehensive content publisher requires monitoring thousands of variations. When you are artificially limited to a few hundred core terms, you lose visibility into the long-tail queries that actually drive conversions. The next pricing tier increases monthly costs significantly, which requires careful budget planning.

Ideal user profile and final verdict

Semrush fits full-service agencies that require a centralized suite for client reporting. The interface aggregates paid search, organic visibility, and social media metrics into one presentable dashboard, making it an excellent client-facing tool.

Choose this for broad baseline visibility, but expect to pair it with specialized tools for granular execution. The entry-level costs provide extensive research capabilities, but enterprise realities require higher tiers the moment daily tracking volume scales up. It's generally the safest anchor for a new agency tech stack.

Ahrefs

Deep historical backlink tracking

Ahrefs analyzes backlink profiles with frequent data updates, giving link builders a highly accurate picture of off-page authority. The crawler maps out exactly when a competitor acquired a specific link and when they lost it. This granular historical view allows digital PR teams to reverse-engineer successful link-building campaigns with precision.

On the technical side, the platform audits up to 500,000 URLs per month on the Lite plan. The auditing tool handles JavaScript rendering well and provides actionable explanations for complex indexing issues. It digs deep into the architecture of enterprise-level domains without requiring advanced configuration.

Strict credit-based usage limits

The platform operates on strict credit-based usage limits. The pricing model heavily restricts high-frequency data exports and iterative research workflows. A keyword filter consumes a credit. A new folder consumes a credit. A backlink profile sort consumes a credit.

The restrictive credit model fundamentally changes how an agency interacts with the tool. Instead of exploring data freely to find hidden opportunities, analysts must hoard credits and carefully plan exactly which reports they want to pull. Teams often hit a frustrating credit wall mid-project, forcing them to either halt work or purchase expensive top-ups just to finish a standard monthly audit.

Ideal user profile and final verdict

Link builders and technical SEOs focused heavily on off-page authority rely on this data. The sheer speed of the backlink crawler makes it the industry standard for competitive gap analysis.

The link data is incredibly deep, but strict export caps make it risky to use as a daily reporting dashboard despite its value for competitive gap analysis. You have to plan your data pulls carefully to avoid surprise overages. Keep a single shared license for heavy technical auditing and link research, while handling daily keyword tracking elsewhere.

Screaming Frog

Deep extraction and custom scraping

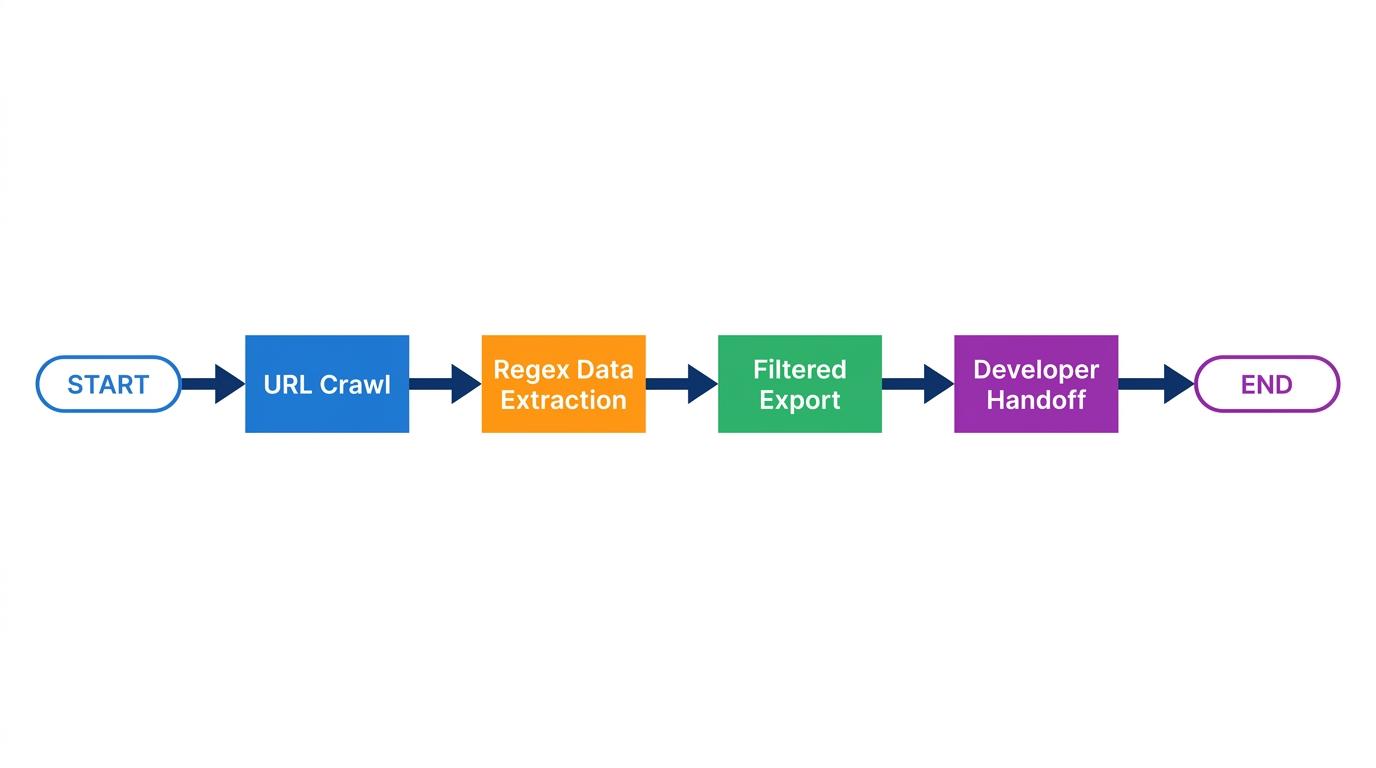

Cloud-based crawlers abstract technical data into colorful dashboards. Screaming Frog gives you raw data exactly as the search engine sees it. The desktop application provides an unfiltered window into site architecture, analyzing server responses, directive tags, and internal linking structures without the guardrails of an all-in-one suite.

The real power lies in data extraction. The crawler extracts custom HTML data using XPath or regex, pulling highly specific information across thousands of pages simultaneously. You can configure it to scrape author names, publication dates, out-of-stock product tags, or specific schema markup elements. When executing a large e-commerce migration, this granular extraction ensures no critical metadata gets lost in the transition.
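
For a feel of what regex-based extraction does, here is a rough standalone sketch that pulls an author name, a publication date, and an out-of-stock flag from raw HTML. The patterns and the sample markup are hypothetical and assume a specific attribute order. In Screaming Frog itself you would supply patterns like these through its custom extraction settings rather than run Python.

```python
import re

# Hypothetical patterns; real sites need patterns matched to their own markup.
AUTHOR_RE = re.compile(r'<meta\s+name="author"\s+content="([^"]*)"', re.IGNORECASE)
DATE_RE = re.compile(r'<time[^>]*\bdatetime="([^"]*)"', re.IGNORECASE)
OUT_OF_STOCK_RE = re.compile(r'class="[^"]*\bout-of-stock\b[^"]*"', re.IGNORECASE)


def extract_fields(html):
    """Return the custom fields found in one page's HTML (None when absent)."""
    author = AUTHOR_RE.search(html)
    published = DATE_RE.search(html)
    return {
        "author": author.group(1) if author else None,
        "published": published.group(1) if published else None,
        "out_of_stock": bool(OUT_OF_STOCK_RE.search(html)),
    }


# Invented pages standing in for crawled URLs.
pages = {
    "/blog/a": '<head><meta name="author" content="Jane Doe"></head>'
               '<time datetime="2024-05-01">May 1</time>',
    "/product/b": '<div class="product out-of-stock">Sold out</div>',
}
extracted = {url: extract_fields(html) for url, html in pages.items()}
```

Regex is brittle against markup changes, which is why XPath is usually the safer choice when the target element has a stable position in the DOM.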

Hardware constraints and localized precision

Consider a team preparing to launch a highly localized campaign for a multi-location franchise. They need to audit crawl budgets and ensure zip-code level ranking accuracy across hundreds of regional landing pages. A generalized web-based platform often lacks the unhindered crawl depth required for this specific enterprise task. Screaming Frog handles it effortlessly, provided the machine running it has the processing power.

The software operates locally. That means the size of your audit is strictly bound by your computer's RAM. A two-million-page enterprise domain scan requires dedicated hardware or a cloud server instance. This creates a barrier for users accustomed to clicking a button and letting a remote server handle the processing.

The entry point is accessible: the free version crawls up to 500 URLs. That threshold works for small local business sites or quick spot-checks on a specific directory. Large-scale structural analysis requires the paid tier.

The technical strategist's verdict

The core appeal comes down to raw extraction and unlimited depth without any cloud abstractions. Technical specialists rely on this daily because it refuses to simplify complex site issues.

The annual license delivers strong value for its cost compared to credit-heavy cloud alternatives. It demands a learning curve, and the interface looks like a massive spreadsheet, but it remains the absolute standard for diagnosing architectural bottlenecks before they impact indexing.

Google Search Console

The absolute baseline for organic truth

Every third-party tool estimates search volume. Google Search Console displays actual impression and click data from Google Search. That distinction separates theoretical research from verified performance. When a new core update rolls out, this dashboard is the only place to confirm exactly which pages gained or lost visibility straight from the index.

Technical diagnostics also start here. The platform highlights mobile usability errors, Core Web Vitals performance, and manual actions. If a page drops out of the index, the URL inspection tool provides the exact server response and crawling status, removing the guesswork from technical troubleshooting.

Historical caps and missing context

A content director attempts to pull a year-over-year performance report for a specific cluster directly from the native interface. They immediately hit a frustrating wall because historical data is capped at 16 months in the interface. That restriction makes long-term trend analysis impossible without a dedicated external data warehouse.
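
A lightweight way to work around the 16-month window is to archive exported rows on a schedule. The sketch below uses SQLite as a stand-in for the "external data warehouse". The table name, column names, and sample rows are assumptions made for illustration, and the export step that would fetch rows from Search Console is deliberately left out.

```python
import sqlite3


def archive_rows(conn, rows):
    """Store exported daily rows; re-running the same export overwrites, not duplicates."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS gsc_daily (
               day TEXT NOT NULL,
               page TEXT NOT NULL,
               clicks INTEGER NOT NULL,
               impressions INTEGER NOT NULL,
               PRIMARY KEY (day, page)
           )"""
    )
    conn.executemany(
        "INSERT OR REPLACE INTO gsc_daily (day, page, clicks, impressions) "
        "VALUES (?, ?, ?, ?)",
        [(r["day"], r["page"], r["clicks"], r["impressions"]) for r in rows],
    )
    conn.commit()


def clicks_between(conn, start, end):
    """Total clicks for an inclusive ISO date range."""
    cur = conn.execute(
        "SELECT COALESCE(SUM(clicks), 0) FROM gsc_daily WHERE day BETWEEN ? AND ?",
        (start, end),
    )
    return cur.fetchone()[0]


conn = sqlite3.connect(":memory:")  # use a file path for a persistent archive
archive_rows(conn, [
    {"day": "2023-03-01", "page": "/guide", "clicks": 120, "impressions": 3000},
    {"day": "2025-03-01", "page": "/guide", "clicks": 90, "impressions": 3100},
])
# A repeated export of the same day leaves the table unchanged.
archive_rows(conn, [
    {"day": "2025-03-01", "page": "/guide", "clicks": 90, "impressions": 3100},
])
march_2023 = clicks_between(conn, "2023-03-01", "2023-03-31")
march_2025 = clicks_between(conn, "2025-03-01", "2025-03-31")
row_count = conn.execute("SELECT COUNT(*) FROM gsc_daily").fetchone()[0]
```

Because dates are stored as ISO strings, plain string comparison in `BETWEEN` sorts them correctly, so comparisons across any number of years work once the archive has been running long enough.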

The isolation of the data presents another major hurdle. The platform lacks competitor data and broad keyword search volumes entirely. It shows exactly how your site performs but provides zero context regarding the overall market size or what rival domains are doing. A page might show declining clicks, but without competitor analysis, you can't tell if you're losing rankings or if the broader search intent has simply shifted away from that topic.

Building a complete reporting pipeline

This is a fundamental prerequisite, yet functionally incomplete without third-party integration. You can't run a modern SEO campaign without it, nor can you run a campaign using it alone.

Professionals must export this data to unlock its actual utility. Connecting the platform's API to your broader tech stack bridges the gap between internal performance and external market conditions. For example, syncing GSC click data directly into a visibility tracker like RankDots gives you a verified baseline for your daily ranking fluctuations, allowing you to communicate clear ROI to clients instead of explaining why third-party traffic estimates look wrong.

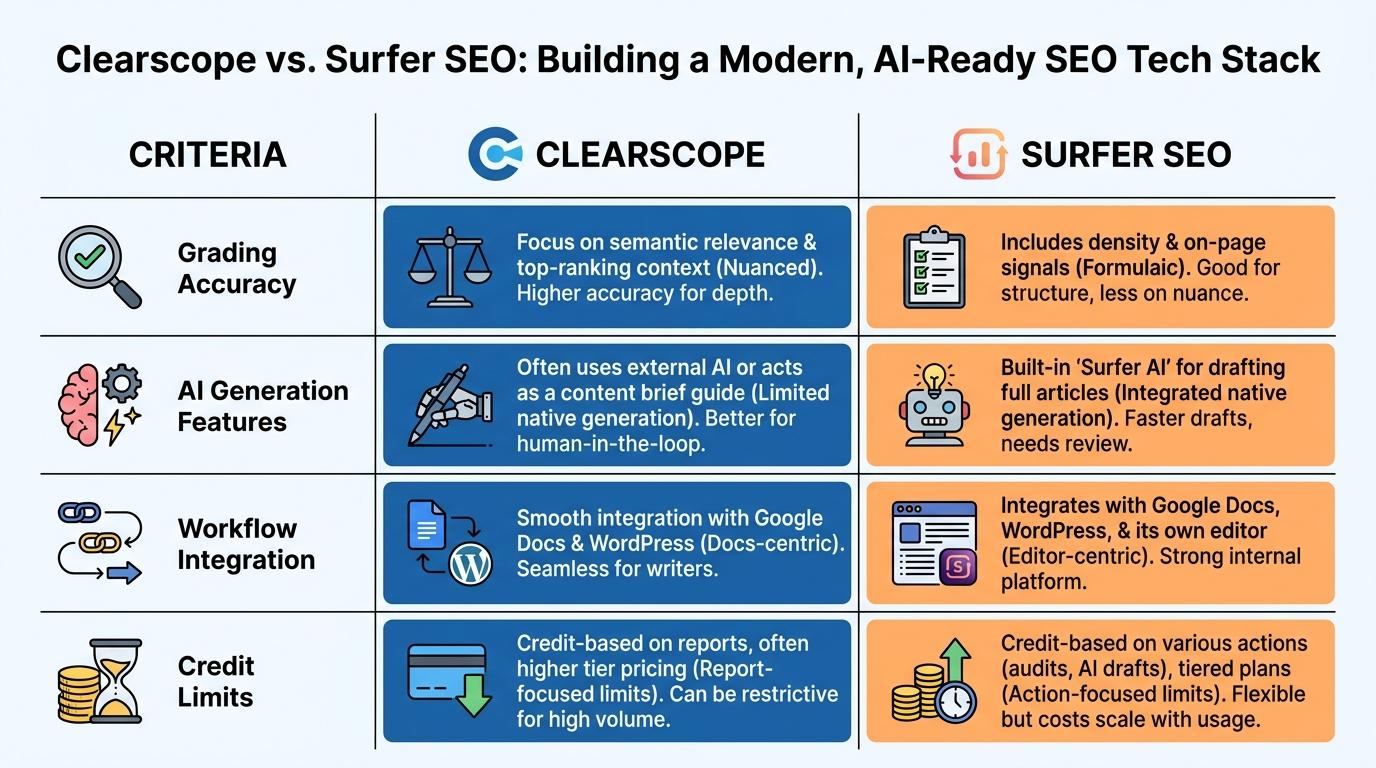

Surfer SEO

Semantic scoring against live competitors

Optimized content requires moving beyond basic keyword stuffing. Surfer SEO provides a Content Editor with a real-time Content Score based on competitor analysis. It analyzes the top-ranking pages for your target query, estimates the semantic density required to compete, and maps out which related entities need to appear in your draft.

Writers get a mathematical breakdown of optimal word counts, heading structures, and image frequency, removing the guesswork from article depth. It integrates an AI writing assistant named Surfy for in-editor support. This helps teams quickly generate outlines or flesh out specific paragraphs based on the tool's semantic recommendations.

The cost of AI-driven drafting

Financial friction scales rapidly with output. The platform carries a high ongoing cost due to an AI credit system. Every new content brief generated or extensive AI draft created burns through your monthly allocation. Agencies publishing dozens of articles a week often find themselves constantly upgrading tiers to keep the editor functioning.

Surfer SEO is also entirely isolated from the broader technical ecosystem. It's disconnected from technical SEO and off-page insights. You can write a mathematically perfect article, but if the site suffers from severe crawl bloat or lacks topical authority, the high content score won't force the page to rank.

Narrow focus and final verdict

The entire workflow centers around achieving semantic density through real-time grading and instant SERP alignment.

Content teams prioritizing precise on-page optimization get massive value from the editor. It trains junior writers to think about topical coverage rather than just primary keywords. This is an excellent choice for isolated page optimization, provided your agency budget can comfortably support the aggressive AI credit burn rate required for large-scale publishing operations.

Clearscope

High-fidelity grading directly in the workflow

Enterprise content teams require standardized quality control before a draft ever reaches the CMS. Clearscope assigns a content grade (A++ to F) based on real-time SERP comparison. It analyzes top-ranking results, extracts the most critical semantic entities, and grades the document dynamically as the writer works.

The workflow integration sets it apart. The grading engine lives directly inside tools like Google Docs and WordPress, so writers stay in their native environment while the editor enforces strict semantic requirements.

Strict focus with zero external context

Precision comes at a steep premium. Clearscope carries prohibitive pricing for low-volume publishers. Small agencies or independent consultants testing the waters of semantic SEO will find the entry-level costs difficult to justify compared to cheaper, broader tool suites.

It maintains an absolute focus on content fidelity and lacks off-page and technical SEO features entirely. You can't track rankings, audit backlinks, or check site speed. It does one specific job with extreme accuracy and ignores the rest of the search landscape.

The premium standard for enterprise content

The pattern is clear across the space: broader tools compromise on grading accuracy, while specialized tools demand higher budgets.

Clearscope is the premium standard for content optimization. Enterprise content departments demanding high-fidelity briefs and tight writer integrations rely on it to scale their publishing without sacrificing topical depth. Deploy it strictly when your production volume is high enough that standardizing editorial quality becomes a larger financial priority than the software license itself.

MarketMuse

Domain-wide topical mapping

A single article is straightforward to evaluate, but auditing thousands of pages to find structural gaps requires heavy computational lifting. MarketMuse maps site content against topic clusters to measure the domain's topical authority. It ingests your entire website architecture and calculates exactly where your topical coverage is strong and where competitors hold an advantage.

This inventory analysis identifies low-hanging fruit at scale. MarketMuse highlights pages with high topical authority but low organic traffic, signaling a need for technical or off-page intervention. It automates the deep content auditing that typically requires weeks of spreadsheet manipulation.
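
The "high authority, low traffic" filter is easy to picture as code. This is a minimal sketch under stated assumptions: the `authority` field is a hypothetical 0-100 score standing in for whatever metric your tool exports, and the thresholds are arbitrary defaults, not MarketMuse's scoring logic.

```python
def find_underperformers(pages, min_authority=70, max_monthly_clicks=50):
    """Return pages that look topically strong but attract few clicks.

    Results are ordered with the highest-authority pages first, since those
    are the most surprising gaps and usually the first to investigate.
    """
    flagged = [
        p for p in pages
        if p["authority"] >= min_authority and p["clicks"] <= max_monthly_clicks
    ]
    return sorted(flagged, key=lambda p: p["authority"], reverse=True)


# Invented inventory for illustration only.
inventory = [
    {"url": "/guides/schema", "authority": 82, "clicks": 12},
    {"url": "/guides/sitemaps", "authority": 91, "clicks": 40},
    {"url": "/blog/news", "authority": 35, "clicks": 5},
    {"url": "/guides/keywords", "authority": 88, "clicks": 900},
]
candidates = [p["url"] for p in find_underperformers(inventory)]
```

Pages on this shortlist already have the content depth, so the fix is more likely internal linking, indexation, or backlinks than a rewrite.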

Interface friction and processing speeds

Deep analysis demands patience. Because the platform processes entire domain architectures and runs complex semantic models, it suffers from a complex interface and slow processing times. Running a comprehensive inventory audit takes time, and the resulting dashboards present a steep learning curve for junior analysts.

The barrier to entry is severe. The pricing shuts out mid-market agencies and independent publishers. This tool requires a dedicated enterprise budget and a strategist who fully understands how to translate dense topical modeling into actionable content briefs.

The strategist's final verdict

The enterprise tag makes sense when you need automated clustering, inventory scoring, and large-scale domain analysis.

The platform is over-engineered for basic keyword research. If you just need search volumes for a blog post, this is the wrong system. It works best for senior strategists managing sprawling content silos across large enterprise domains. When you need to reorganize thousands of pages into a logical, authoritative structure, the depth of its mapping capabilities is unmatched in the industry.

Frase

Synthesizing SERP data into actionable briefs

Manual outline creation wastes hours of valuable research time. Frase speeds up that initial discovery phase. It generates content briefs after analyzing top SERP results. It scrapes the current ranking pages for your target query, extracts the core heading structures, and compiles a comprehensive framework for writers to follow. Freelance writers and lean marketing teams use this exact workflow to scale their production output without spending the entire morning reading competitor articles one by one.

The missing data layer

An efficient draft structure doesn't replace the need for foundational search metrics. Frase lacks comprehensive native SEO research metrics without paid add-ons. If you want to check standard search volumes, evaluate keyword difficulty, or review backlink profiles, the base subscription forces you to look elsewhere.

It also misses traditional rank tracking and site audit features entirely. You can build a mathematically optimized article within the editor, but you can't monitor how those optimized briefs actually perform in the search engine results pages once published.

Production focus and final verdict

A pure content generator leaves glaring blind spots in a broader strategy. You have to pair it with a traditional research platform to verify search demand before writing a single word.

Fast drafting comes at the cost of missing fundamentals and forced upgrades for basic data. This fits strictly for teams focused purely on output velocity. The accessible solo plans make it an easy entry point for independent writers, but agency strategists will immediately feel the absence of deep architectural and tracking capabilities.

Nightwatch

Hyper-local tracking and AI visibility

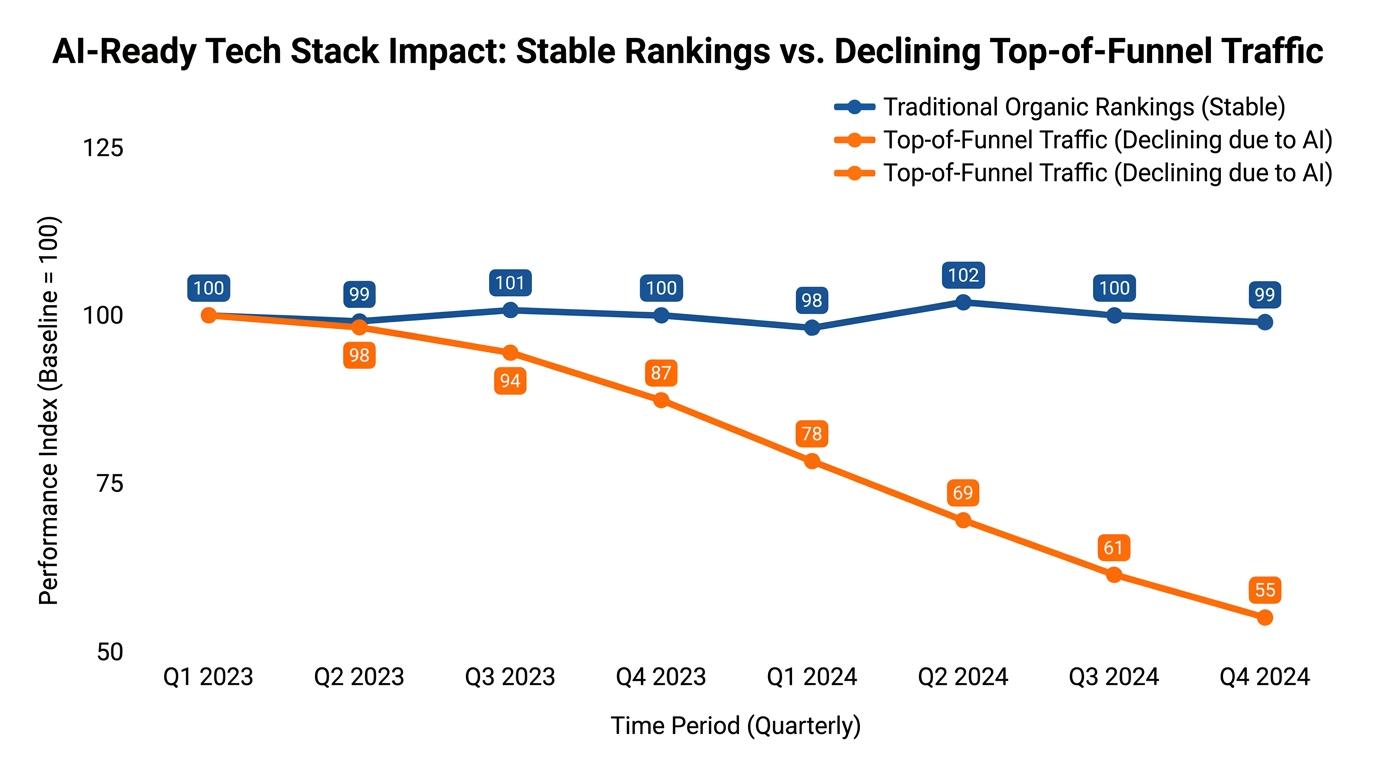

You watch traffic to top-of-funnel informational pages plummet over a three-month period, even though standard trackers show those pages holding the number one spot. The gap between those two realities usually points to generative engines stealing clicks directly in the interface. Nightwatch addresses that exact tracking failure because it tracks brand visibility within large language models (LLMs) such as ChatGPT and Claude.

Nightwatch also excels at regional precision. It provides location-specific tracking from 100,000+ locations worldwide, delivering hyper-accurate local keyword rank tracking down to the zip-code level. Multi-location franchises use this granularity to see exactly how their service pages perform in specific neighborhoods rather than settling for a generalized national average.
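
The rank-versus-clicks gap described above can be detected with a simple comparison of two periods. The sketch below flags pages whose position barely moved while clicks fell sharply. The field names, thresholds, and sample figures are assumptions for illustration, and the check says nothing about why the clicks disappeared.

```python
def flag_click_erosion(pages, max_rank_change=0.5, min_click_drop=0.3):
    """Flag pages with a stable rank but a large relative drop in clicks.

    Returns (url, fractional_click_drop) tuples for pages whose rank moved
    by at most max_rank_change and whose clicks fell by min_click_drop or more.
    """
    flagged = []
    for p in pages:
        if p["clicks_before"] == 0:
            continue  # no baseline to compare against
        rank_change = abs(p["rank_after"] - p["rank_before"])
        click_drop = (p["clicks_before"] - p["clicks_after"]) / p["clicks_before"]
        if rank_change <= max_rank_change and click_drop >= min_click_drop:
            flagged.append((p["url"], round(click_drop, 2)))
    return flagged


# Invented period-over-period data for illustration only.
pages = [
    {"url": "/what-is-seo", "rank_before": 1, "rank_after": 1,
     "clicks_before": 1000, "clicks_after": 550},
    {"url": "/pricing", "rank_before": 2, "rank_after": 6,
     "clicks_before": 400, "clicks_after": 150},
    {"url": "/blog/tips", "rank_before": 3, "rank_after": 3,
     "clicks_before": 200, "clicks_after": 190},
]
eroded = flag_click_erosion(pages)
```

A page on this list is a candidate for AI-visibility tracking, since a conventional rank report would show nothing wrong with it.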

The missing off-page context

Visibility tracking represents only one piece of the organic puzzle. It lacks the deep link building components expected from heavy suite tools. You can't run a competitive backlink gap analysis or audit a prospect's domain authority within the interface.

The verdict for specialized reporting

Generic suite trackers often smooth out the volatility of local map packs and dynamic LLM responses. Nightwatch is a strong choice for localized performance reporting precisely because it captures those granular fluctuations. Agencies get a definitive picture of exactly where traffic originates when they connect its precise location data with a verified click tracker like RankDots.

Cost tiers scale based directly on keyword volume. That makes the pricing predictable for agencies aggressively tracking generative engine performance across hundreds of distinct local markets.

SE Ranking

Bridging daily monitoring and AI tracking

Mid-sized agencies frequently overpay for legacy enterprise platforms just to access reliable, frequent data updates. SE Ranking offers a compelling alternative by providing solid daily keyword tracking merged with new brand visibility monitoring. The software tracks 2,000 daily keywords and provides unlimited site audits on the Core plan, setting a high baseline for routine campaign management without immediate paywalls.

The platform also monitors brand visibility across 6 AI search platforms. That integration helps technical teams bridge the gap between classic ten-blue-link tracking and the modern reality of LLM-driven answers, ensuring visibility reports reflect where users actually find information today.

Volume restrictions at scale

The value proposition shifts dramatically when campaign sizes expand. The pricing model heavily restricts high-volume keyword tracking. Cost scales sharply for agencies demanding high-frequency, large-volume tracking across thousands of enterprise product pages.

More terms push the monthly subscription into the exact same premium territory as the legacy suites the agency originally tried to escape. You must forecast your budget carefully to avoid sudden invoice spikes when managing vast e-commerce architectures on this platform.

The mid-market compromise

The platform offers solid daily data and broad AI visibility, but carries steep scaling costs.

This is often the smartest mid-tier compromise for teams tired of overpaying for unused legacy features. The core plan pricing dynamics work perfectly for agencies managing a handful of local or regional clients, provided they keep strict control over their active tracking lists and regularly purge inactive keywords.

Moz

Accessible AI visibility dashboards

Complex technical interfaces intimidate stakeholders who just want to understand their high-level search presence. Moz prioritizes clean AI visibility dashboards tracking prompt performance in modern generative models. The software offers an AI visibility dashboard and prompt tracking for GPT and Gemini, translating dense LLM query data into readable, presentation-ready charts.

In-house marketers focused on high-level strategy over granular data manipulation gravitate toward this approach. The Standard Pro plan tracks up to 300 keywords and crawls 400,000 pages per month, covering the essential baseline requirements of most small to mid-sized business websites without overwhelming the user.

Database size and metric freshness

A clean interface sometimes comes at the expense of raw data depth. The platform relies on a smaller keyword database and less frequent SERP updates compared to premium alternatives.

Strategists running aggressive, fast-paced campaigns often notice a lag between actual search volume shifts and the numbers reported in the tool. When you need real-time competitive intelligence during a volatile core update, that delay becomes a serious operational bottleneck.

Strategic focus over technical depth

The platform prioritizes safe navigation and clean reporting over raw speed. It's highly user-friendly, but aggressive technical teams eventually outgrow it.

Standard Pro plan costs remain reasonable for what it offers, making it a reliable starting point for internal marketing departments. Agencies typically transition away from it once their developers demand real-time crawl data or their content teams hit the database limitations for obscure long-tail queries.

Majestic

Forensic analysis of off-page authority

To evaluate a domain's off-page health, you must look past sheer volume to understand the actual quality of incoming links. Majestic calculates proprietary Trust Flow and Citation Flow metrics to separate manipulative spam from genuine authority. Dedicated link-building agencies conducting massive domain appraisals rely on these numbers to qualify outreach targets quickly and effectively.

The platform provides historical backlink tracking and link acquisition charts. These map exactly how a site earned its authority over time. This historical perspective proves essential when diagnosing algorithmic penalties or identifying toxic link spikes that threaten a client's organic standing.
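
One common way link builders put these two metrics to work is by comparing them as a ratio: a domain with plenty of Citation Flow but little Trust Flow has many links and few trustworthy ones. The sketch below applies that heuristic to a prospect list. The thresholds are arbitrary assumptions, the domains are invented, and none of this is an official Majestic rule.

```python
def trust_ratio(trust_flow, citation_flow):
    """Trust Flow divided by Citation Flow; 0.0 when there is no Citation Flow."""
    return trust_flow / citation_flow if citation_flow else 0.0


def qualify_targets(domains, min_ratio=0.5, min_trust_flow=15):
    """Keep domains that clear both thresholds, best ratio first."""
    qualified = [
        d for d in domains
        if d["tf"] >= min_trust_flow and trust_ratio(d["tf"], d["cf"]) >= min_ratio
    ]
    return sorted(qualified, key=lambda d: trust_ratio(d["tf"], d["cf"]), reverse=True)


# Invented prospects for illustration only.
prospects = [
    {"domain": "niche-blog.example", "tf": 20, "cf": 30},
    {"domain": "spammy-directory.example", "tf": 8, "cf": 50},
    {"domain": "trusted-news.example", "tf": 40, "cf": 45},
]
shortlist = [d["domain"] for d in qualify_targets(prospects)]
```

The minimum Trust Flow floor matters as much as the ratio, because a brand-new domain with almost no links can show a flattering ratio on tiny numbers.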

The absolute exclusion of on-page data

Extreme specialization creates massive operational blind spots. The software lacks broader SEO features outside of link analysis.

You'll find a lack of traditional search volume metrics, keyword difficulty scores, and on-page optimization tools. You can't use this system to plan a content calendar or audit a site's technical architecture. It ignores the actual website entirely to focus exclusively on what points to it.

The link specialist's verdict

Deep link history, proprietary trust scores, and no content utility define the tool perfectly.

It remains an essential forensic tool for backlink specialists that offers zero utility for content creators. The Lite plan entry constraints limit historical data access significantly, so serious link builders typically need the higher tiers to conduct the full domain appraisals their campaigns demand.

Mangools

Most keyword tools overwhelm you with dozens of filtered columns before you even see a basic difficulty score. Mangools takes the opposite approach. It prioritizes immediate visual clarity over sheer data density. It relies on a substantial database of 2.5 billion keywords and over 30 million SERPs, presenting core search volume alongside an immediate local ranking snapshot. You see exactly what currently ranks in a specific city without digging through secondary configuration menus.

Local search campaigns require precise intent verification. When evaluating a query like "emergency roof repair," a national average search volume tells you very little about the actual competition in a specific zip code. The platform allows you to check the live SERP for that exact location, showing you whether the results favor localized directories or individual service businesses. Rapid verification prevents you from targeting terms where the search engine has already decided to heavily favor map packs over traditional blue links.

The trade-off for simplicity

That streamlined interface comes at a strict functional cost. The platform lacks the heavy processing power required for enterprise-scale technical site audits. It also omits the native content production and semantic grading features found in heavier software suites. You can't run a deep architectural crawl of a massive e-commerce domain or generate dynamic AI content briefs directly within this interface.

If your daily workflow involves managing hundreds of server redirects or diagnosing complex JavaScript rendering issues, you'll hit a wall immediately. The included crawler handles basic health checks but stops short of the granular extraction technical specialists demand for large migrations.

The strategic verdict for solo operators

Solopreneurs and small businesses usually prioritize fast, readable insights over large data warehouses they barely use. The software fits perfectly if you need rapid intent checks and ranking snapshots, provided you don't require deep crawling capabilities.

The platform delivers quick checks and clean design, but completely ignores deep crawling.

The entry-level annual billing works well for independent operators who need a reliable pulse on their primary keyword targets but prefer handling their technical diagnostics using specialized desktop crawlers.

Ubersuggest

Baseline search metrics shouldn't require a master's degree in data manipulation. Ubersuggest delivers exactly what early-stage marketers need to get moving: a straightforward presentation of search volume, CPC metrics, and basic SEO difficulty scores. It aggregates these core data points into an accessible dashboard that answers immediate questions about keyword viability without overwhelming a new user.

Content creators mapping out their first editorial calendar use this CPC data to estimate potential commercial value before drafting a single word. If a query shows high volume but a CPC of zero, the traffic likely carries purely informational intent. The interface highlights these basic relationships clearly. Beginners can easily filter out vanity metrics and focus on terms that might actually generate revenue.
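The volume-versus-CPC check described above can be sketched as a small triage function. This is a minimal illustration, not Ubersuggest's own logic: the `Keyword` class, the `classify_intent` function, both thresholds, and the sample figures are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_volume: int
    cpc: float  # cost per click, in your account currency


def classify_intent(kw: Keyword, min_volume: int = 500, min_cpc: float = 0.50) -> str:
    """Return a rough triage label. Thresholds are illustrative assumptions."""
    if kw.monthly_volume < min_volume:
        return "low-volume"
    if kw.cpc == 0:
        # High volume with no advertiser bids suggests informational intent.
        return "likely informational"
    if kw.cpc >= min_cpc:
        return "likely commercial"
    return "mixed"


# Made-up sample data, for illustration only.
keywords = [
    Keyword("what is a roof truss", 4400, 0.0),
    Keyword("emergency roof repair", 2900, 14.20),
    Keyword("roof repair forum", 120, 0.0),
]

labels = {kw.phrase: classify_intent(kw) for kw in keywords}
for phrase, label in labels.items():
    print(f"{phrase}: {label}")
```

Treat the labels as a first-pass filter only. A live SERP check is still the more reliable way to confirm intent before committing to a brief.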

Constraints on data manipulation

Rapid scaling quickly exposes the platform's structural limitations. The software heavily restricts data export functionality, making it difficult to pull raw numbers into external spreadsheets for complex topical mapping or client reporting. You have to work within their walled garden, creating significant friction when you need to merge keyword data with external click metrics.

The system also operates with a noticeably smaller backlink index compared to legacy enterprise competitors. If you need to map out an aggressive digital PR campaign or reverse-engineer a competitor's decade-old link profile, you'll find glaring gaps in the reporting. The crawler simply doesn't catch the obscure tier-two links that specialized link-building tools index daily.

The low-cost discovery verdict

Early-stage bloggers and in-house teams operating with minimal software budgets often start their journey here. The generous free tier provides enough daily data for sporadic research, while the paid plans remain exceptionally affordable compared to the wider market.

You get affordable discovery and basic metrics, but the data extraction remains strictly limited.

It is a valuable discovery engine for those starting out, but proves entirely unreliable for competitive enterprise analysis. Once you need to extract thousands of rows of data or audit extensive link profiles, you'll need to migrate to a heavier suite.

All in One SEO (AIOSEO)

Content teams often write in Google Docs, check semantic scores in a third-party application, and then paste the final text into their CMS. All in One SEO (AIOSEO) eliminates that workflow friction entirely. It brings TruSEO on-page analysis directly into the editor. You get real-time optimization checks as you type. Writers see exactly how their draft stacks up against basic structural guidelines before they ever hit the publish button.

Automating technical indexing

The plugin handles backend indexing with minimal manual input. It automatically generates smart XML and video sitemaps, so search engines can discover new multimedia content shortly after it goes live. News publishers and video-heavy affiliate sites benefit massively from this automation. When you embed a product review video into a post, the platform updates the video sitemap automatically, exposing that new asset to search engines without requiring a developer to adjust the server configuration.
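For readers who have never seen one, a video sitemap entry is an ordinary sitemap `<url>` element with an extra `<video:video>` block. The sketch below builds one with Python's standard library, following the sitemap protocol and Google's video sitemap extension. It is not AIOSEO's implementation: the `build_video_sitemap` helper and the example.com URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"

# Serialize with a default namespace plus the conventional "video" prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("video", VIDEO_NS)


def build_video_sitemap(entries):
    """Return sitemap XML as a string. `entries` is a list of dicts (hypothetical shape)."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for entry in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = entry["page_url"]
        video = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        for tag in ("thumbnail_loc", "title", "description", "content_loc"):
            ET.SubElement(video, f"{{{VIDEO_NS}}}{tag}").text = entry[tag]
    return ET.tostring(urlset, encoding="unicode")


# Placeholder data; every URL here is an assumption for the example.
sitemap_xml = build_video_sitemap([
    {
        "page_url": "https://example.com/reviews/blender",
        "thumbnail_loc": "https://example.com/thumbs/blender.jpg",
        "title": "Blender review",
        "description": "Hands-on review of a countertop blender.",
        "content_loc": "https://example.com/video/blender.mp4",
    }
])
print(sitemap_xml)
```

A plugin that automates this step is effectively regenerating a file like this whenever a post with an embedded video is published or updated.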

The scaling paywall

A single high-traffic domain works flawlessly, but scaling across a portfolio introduces immediate financial friction. Multi-site management and advanced integrations require jumping to the expensive pricing tiers. Agencies managing dozens of independent client sites will find that the base license offers limited utility for their broader operations.

You must purchase premium licenses to unlock the features required for bulk administration and advanced WooCommerce support. The plugin becomes quite costly for an agency trying to standardize its WordPress tech stack across fifty different client retainers.

The WordPress publisher's verdict

WordPress-native publishers handling complex on-site technical architecture need tools that sit directly in their daily workflow. This is the strongest native plugin alternative to Yoast for managing site structure directly within the CMS environment.

If you manage a single heavy-traffic blog, the standard cost makes complete sense. Agencies must calculate whether the multi-site scaling costs justify the convenience of native integration compared to handling technical workflows externally.

BrightEdge

Global enterprise brands managing millions of pages break standard SaaS trackers instantly. BrightEdge handles that immense scale through its Data Cube X engine, which provides enterprise-scale competitive intelligence and real-time tracking across massive keyword sets. When you need to monitor organic visibility across dozens of global markets and map exactly how generative search impacts a multi-national product catalog, this system possesses the processing depth to handle the load.

Standard tools crash when you ask them to filter two million keywords by specific zip codes. The enterprise crawler ingests that data and translates it into high-level forecasting models. Corporate marketing directors can then predict revenue shifts based on specific algorithmic updates.

Operational friction and opacity

Processing power at this scale demands a serious operational commitment from your team. The platform presents a notoriously steep learning curve and a highly complex interface. A junior analyst cannot simply log in and intuitively build a weekly report; they require dedicated training just to navigate the core dashboards.

Procurement teams also face significant hurdles during the buying process. The company relies on opaque, expensive quote-based pricing. Expect heavy contract negotiations, strict seat restrictions, and long-term commitments rather than a transparent monthly credit card swipe. You pay a premium for the dedicated enterprise account management.

The enterprise deployment verdict

BrightEdge targets a very specific buyer profile, ignoring the small agency market entirely. Large corporate departments with dedicated data analysts will extract massive value from its forecasting models and global tracking capabilities.

Extensive processing scale meets a steep learning curve and opaque contracts.

It becomes disastrously expensive if adopted by under-resourced departments that lack the specialized staff to configure its dashboards. Deploy it strictly when you have the internal headcount and the corporate budget to turn its raw competitive intelligence into active strategy.

ChatGPT

Raw data exports from technical crawlers often overwhelm clients and junior staff who lack advanced spreadsheet skills. ChatGPT changes how you process those dense files. Powered by GPT-5 family models, it generates text, code, and logical reasoning directly from your uploaded datasets. You can export a massive list of server errors, drop it into the chat interface, and ask the model to extract the top priorities for an immediate developer handoff.
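A very large export can be awkward to upload in one piece, so one option is to pre-aggregate it locally and hand the model a compact summary alongside the raw file. The sketch below assumes a hypothetical crawl export with `url` and `status_code` columns, which is not any specific crawler's real schema, and ranks server errors ahead of client errors.

```python
import csv
import io
from collections import Counter

# Hypothetical crawl export; the column names are assumptions.
CRAWL_CSV = """url,status_code
https://example.com/a,500
https://example.com/b,404
https://example.com/c,404
https://example.com/d,503
https://example.com/e,200
"""


def summarize_errors(csv_text):
    """Count error responses and order them: 5xx first, then by frequency."""
    counts = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        code = int(row["status_code"])
        if code >= 400:
            counts[code] += 1
    return sorted(counts.items(), key=lambda item: (item[0] < 500, -item[1], item[0]))


summary = summarize_errors(CRAWL_CSV)
for code, count in summary:
    print(f"HTTP {code}: {count} URL(s)")
```

Pasting a summary like this into the prompt gives the model a fixed frame of reference, which makes it easier to spot when its prioritized list disagrees with the underlying counts.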

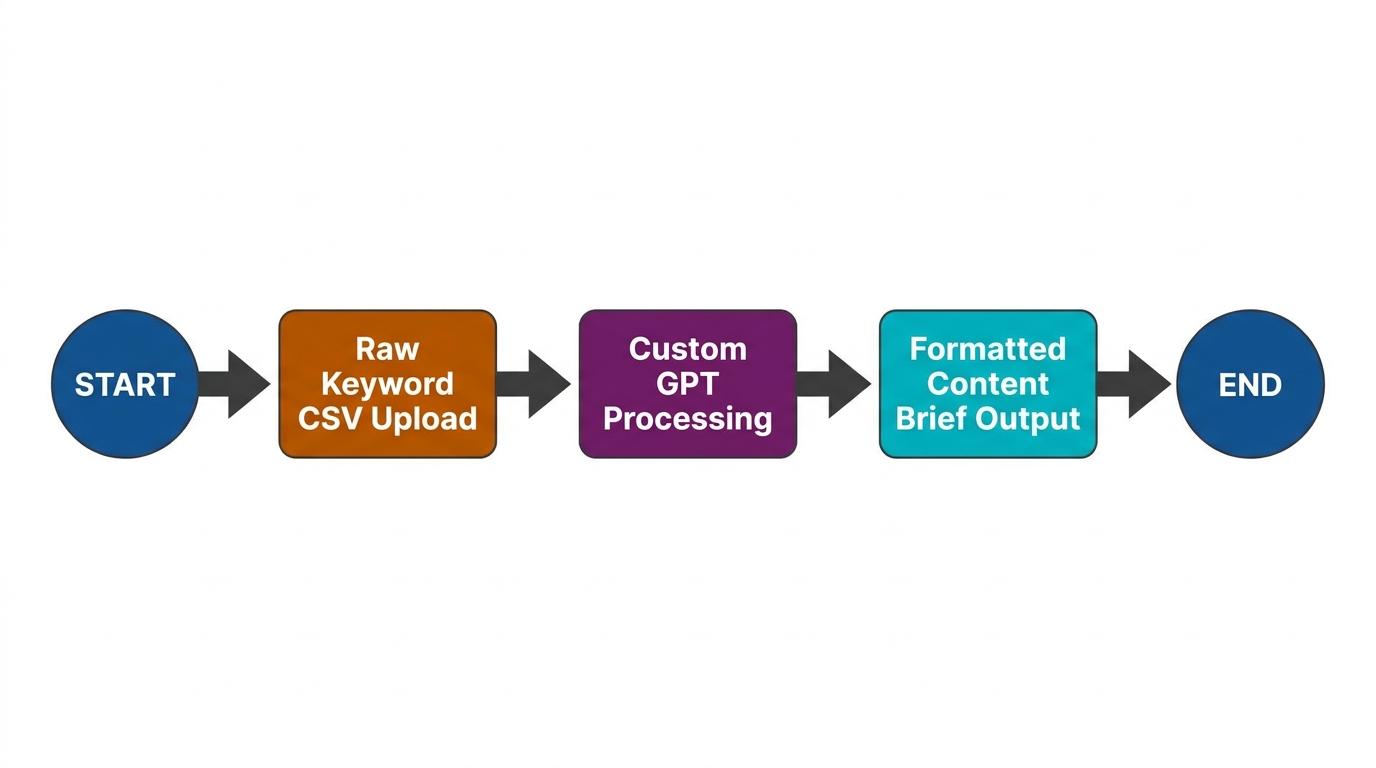

Custom processing environments

The platform supports the creation of custom GPTs and isolated team workspaces. Forward-thinking agencies build dedicated assistants pre-loaded with their specific brand guidelines, tone of voice rules, and technical formatting requirements. A strategist simply feeds the raw data to their custom instance and receives a perfectly structured outline in seconds.

Severe limitations in independent research

Using this model as a source of native search metrics sets your strategy up to fail. It cannot fetch reliable search volume or organic keyword difficulty scores on its own. If you ask the prompt interface for monthly search traffic estimates, it will confidently invent numbers that look plausible but have no basis in reality.

Heavy operational use also quickly hits rigid message caps across the standard tiers. You can easily burn through your limit during a complex data analysis session, freezing your workflow mid-task until the timer resets.

The integration verdict

Strategists use this strictly as a data processing engine rather than a discovery tool. It becomes a powerful workflow accelerator when fed verified external data from a tool like RankDots or Screaming Frog.

It's a mandatory addition to the modern stack, but remains a dangerous liability if trusted as an independent research source. Compare the Plus plan's strict limitations against the Pro tier's scaling costs to determine which level supports your agency's daily prompt volume.

Frequently asked questions

What is the most effective tool for SEO?

Which SEO tool is best for small businesses?

What are the best SEO tools for digital marketing agencies?

Can ChatGPT or other AI platforms effectively do SEO?

Which SEO tools are most accurate for rank tracking?

Are paid SEO tools worth the investment compared to free options?

Final workflow advice and conclusion

Connecting specialized solutions with general suites

No single platform handles every stage of a modern search campaign perfectly. Broad legacy suites provide excellent initial discovery and baseline technical health checks. They give you the broad market context necessary to understand where a domain stands against its competitors. However, they frequently lack the surgical precision required for hyper-local tracking or deep semantic content grading.

The most resilient agencies bridge this gap through deliberate data interoperability. You establish an anchor suite for broad search volumes and initial domain metrics. Then, you export that foundational data into specialized point solutions for granular execution. Relying entirely on an all-in-one platform forces compromises on technical depth. Buying only specialized applications leaves you blind to broader market movements.

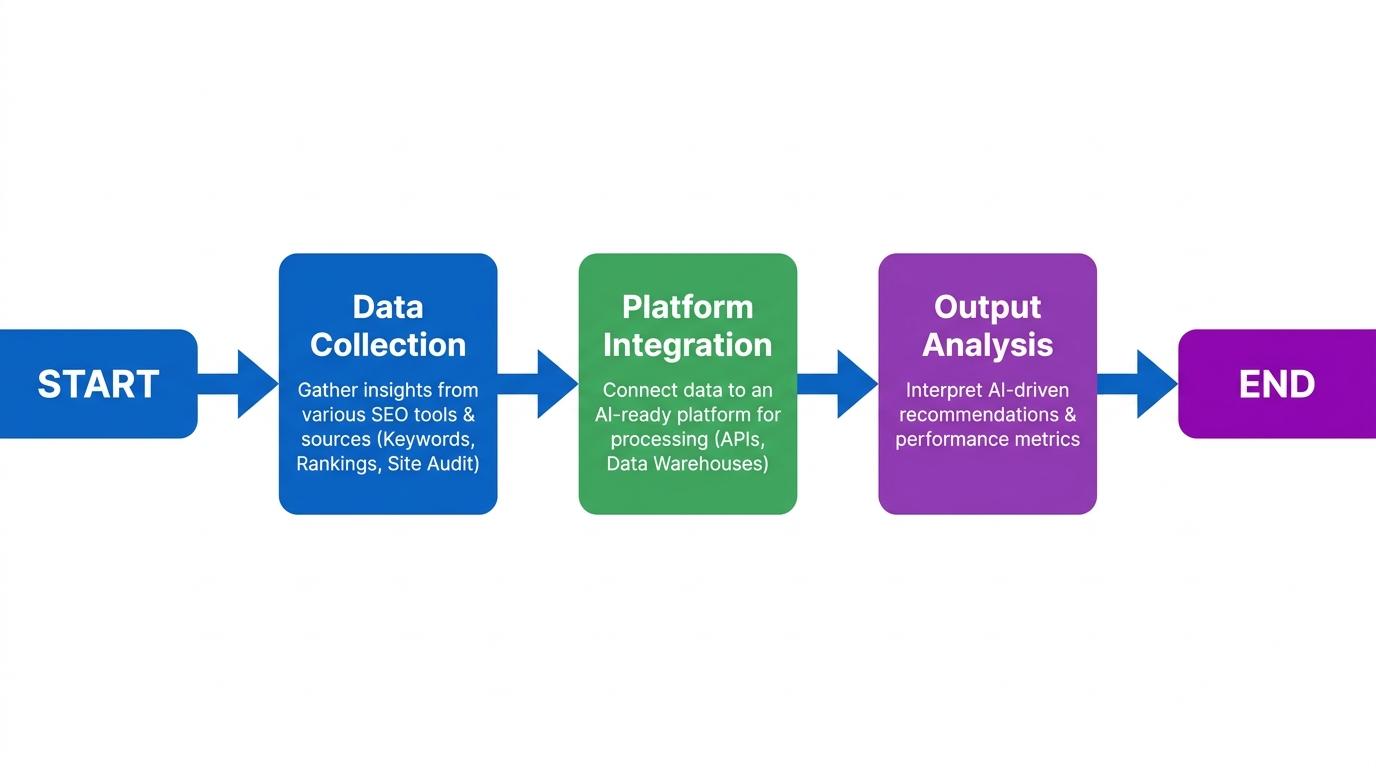

In practice, that means building a hybrid workflow:

- Use broad platforms as data warehouses to store foundational metrics.

- Use focused point solutions as execution engines to drive daily optimization.

Visualizing data before content execution

Raw data exports hold zero strategic value until you organize them into a logical site architecture. A five-thousand-row spreadsheet of targeted phrases usually guarantees keyword cannibalization and misaligned search intent when handed to a writing team. The critical operational step between initial discovery and actual drafting is visual mapping.

Consider a manager trying to consolidate tools while mapping an upcoming content pillar. They need to turn scattered keyword lists into a structured topical map while keeping a real-time pulse on actual search performance. They import those lists into RankDots to structure the plan, collecting up to 50,000 relevant keywords per project by reverse-engineering Google ranking patterns. An automated visual map replaces hours of manual spreadsheet sorting and removes massive administrative friction. When you group those terms by shared SERP overlap, every new page targets a distinct intent, which reduces internal competition before a single brief gets written.
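Grouping by shared SERP overlap can be illustrated with a short sketch: two keywords land in the same cluster when their top results share enough URLs. This is a generic single-linkage approach using union-find, not RankDots' actual algorithm. The `cluster_by_serp_overlap` function, the `min_shared` threshold, and the sample SERPs are assumptions for the example.

```python
def cluster_by_serp_overlap(serps, min_shared=3):
    """Group keywords whose result lists share at least `min_shared` URLs.

    `serps` maps each keyword to a list of ranking URLs (hypothetical input shape).
    """
    parent = {kw: kw for kw in serps}

    def find(kw):
        # Follow parent links to the cluster root, compressing the path as we go.
        while parent[kw] != kw:
            parent[kw] = parent[parent[kw]]
            kw = parent[kw]
        return kw

    keywords = list(serps)
    for i, a in enumerate(keywords):
        for b in keywords[i + 1:]:
            if len(set(serps[a]) & set(serps[b])) >= min_shared:
                parent[find(a)] = find(b)  # merge the two clusters

    clusters = {}
    for kw in keywords:
        clusters.setdefault(find(kw), []).append(kw)
    return sorted(sorted(group) for group in clusters.values())


# Made-up SERP data using placeholder URLs.
serps = {
    "roof repair cost": [
        "https://example.com/cost", "https://example.org/pricing",
        "https://example.net/guide", "https://example.com/blog",
    ],
    "how much does roof repair cost": [
        "https://example.com/cost", "https://example.org/pricing",
        "https://example.net/guide", "https://example.org/faq",
    ],
    "emergency roof repair": [
        "https://example.com/emergency", "https://example.org/24h",
        "https://example.net/call", "https://example.com/cost",
    ],
}

clusters = cluster_by_serp_overlap(serps)
for group in clusters:
    print(group)
```

Here the two cost queries share three URLs and merge into one page target, while the emergency query stays separate. Note that single-linkage merging can chain loosely related terms together, so a stricter threshold or a manual review pass is worth considering on large keyword sets.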

Balancing agency budgets against daily utility

Software bloat quietly erodes campaign margins. The default approach to assembling top SEO tools usually involves securing premium licenses for every major platform on the market, only to use a fraction of their capabilities. Stop paying for overlap.

Evaluate your toolkit based on daily operational utility rather than theoretical feature lists. If your technical team only executes deep structural crawls once a quarter, paying a high monthly cloud subscription makes very little sense. A localized desktop crawler handles that exact job for a fraction of the cost. Conversely, if your strategy relies on tracking thousands of daily generative ranking fluctuations, investing heavily in a premium visibility engine is non-negotiable.
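The quarterly-crawl comparison comes down to simple cost-per-use arithmetic. The sketch below uses entirely hypothetical prices, not quotes from any vendor, and the `cost_per_use` helper is introduced only for illustration.

```python
def cost_per_use(annual_cost: float, uses_per_year: int) -> float:
    """Annual spend divided by how often the tool is actually used."""
    return annual_cost / uses_per_year


# Hypothetical figures; substitute your own invoices and usage logs.
cloud_monthly_fee = 200.0      # assumed cloud crawler subscription
desktop_annual_fee = 250.0     # assumed desktop crawler license
crawls_per_year = 4            # one deep structural crawl per quarter

cloud = cost_per_use(cloud_monthly_fee * 12, crawls_per_year)
desktop = cost_per_use(desktop_annual_fee, crawls_per_year)

print(f"Cloud subscription: {cloud:.2f} per crawl")
print(f"Desktop license:    {desktop:.2f} per crawl")
```

Running the same calculation with daily usage instead of quarterly usage shows why a premium subscription can still be the cheaper option for a tool your team opens every morning.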

Audit your current software expenses. Identify the dashboards your team actually logs into every morning. Cancel the redundant subscriptions that overlap with your core platforms, and rebuild a streamlined stack designed specifically around your agency's actual workflow bottlenecks.

Stop juggling subscriptions and centralize your Top SEO Tools.

Turn scattered search data into structured, ready-to-execute topical maps. Automated clustering eliminates manual spreadsheet sorting so you reduce internal competition and launch profitable campaigns faster.