How to rank in a competitive niche without a massive budget

Most keyword difficulty scores are misleading.

You find a keyword with low competition, open the SERP, and immediately see giant brands dominating page one. Reddit. Forbes. HubSpot. Amazon. At that point, ranking can feel impossible without a massive backlink profile.

But that’s not how modern SEO actually works.

The problem is that traditional keyword difficulty metrics measure overall competition — not whether your specific site has a realistic chance to compete.

And that creates opportunity.

Even in crowded niches, dominant sites leave behind structural gaps: weak topical coverage, mismatched search intent, outdated pages, and low-relevance content clusters. Smaller websites can consistently outperform larger competitors by targeting those weaknesses strategically instead of chasing generic low-KD keywords.

In this guide, we’ll break down a 5-step framework for finding site-relative SERP opportunities, building targeted topic clusters, and generating organic traffic in competitive niches without relying on massive link-building campaigns.

Understanding site-relative competition vs. generic metrics

The false promise of generic difficulty

You pull keyword data from standard tools, target terms with low generic difficulty scores, and spend weeks writing content. Months later, you still can't rank. The standard metric failed because it didn't account for your specific domain strength. When you check keyword search volumes and difficulty scores in tools like Ahrefs, the difficulty score describes the pages already ranking, typically their link profiles, not your website's reality. Generic scores treat a fresh domain and a ten-year-old publication as equals.

Backlinko analyzed 11.8 million Google search results and found that a site's overall link authority strongly correlates with higher search engine rankings. Overarching domain authority has a more significant correlation with first-page rankings than the authority of the individual ranking page itself. Correlation is not causation, but the pattern suggests the engine often favors the domain over the document. Treat generic keyword difficulty scores as a baseline only.

Shifting to site-relative evaluation

A low difficulty score gives false confidence if search engines still prioritize overarching domain strength for that specific query. We need a different benchmark. Instead of asking how hard a keyword is generally, ask how hard the keyword is for your exact website.

The answer lies in finding an easy-to-rank spot. Look for a top-10 position currently held by a site with lower authority than yours. If a specialized boutique marketing agency competes against large software directories, generic scores look terrifying. But if that agency finds a forum thread or a weaker local competitor sitting in position seven, a vulnerability exists. With RankDots, you evaluate SERP competition relative to your specific site's domain and page authority rather than using a generic difficulty score. Targeting relative weakness turns abstract competition into a practical hit list.

How to rank in a competitive niche in 5 steps

- Audit the top-10 search results

  Open an incognito browser window and search your target term. Check the domain authority of the first page results using a free SEO extension. The goal is to identify at least one weaker competitor currently ranking on the first page.

- Extract competitor keyword gaps

  Paste the specific URL of that weaker competitor into an SEO analysis tool to view their organic keywords. Filter the list to isolate conversational, multi-word queries. You've now compiled a raw spreadsheet of long-tail phrases currently driving their traffic.

- Group queries by SERP overlap

  Search your extracted phrases and compare the first-page URLs for each. Don't combine keywords onto the same target page unless they share mostly identical search results. The outcome is a structured content plan organized by distinct user intent.

- Match format and inject expertise

  Outline your draft using the exact content structure the search engine currently favors for that query. Add original data or specific field observations the weaker competitor lacks. The result is a comprehensive page ready to capture that SERP vulnerability.

- Publish and build internal links

  Publish your optimized page and add internal links from topically relevant articles on your site. This helps search engines discover your new content and passes existing authority to the page without requiring external backlinks.

Step 1: Assess true competition with site-relative metrics

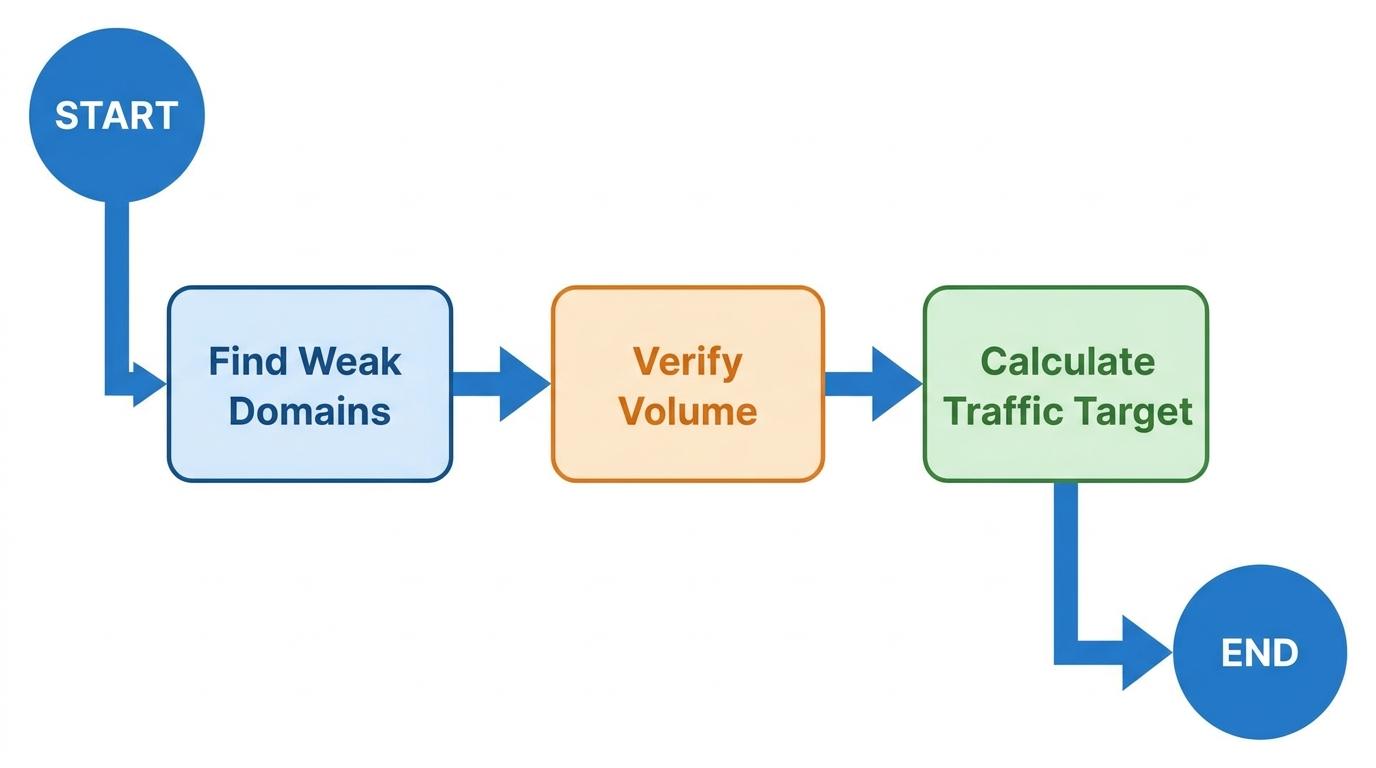

Calculate your top-10 vulnerabilities

Stop looking at aggregate difficulty scores and start counting vulnerable domains. Pinpoint exactly how many weaker sites currently rank in the top 10 for your target keyword to prove you have a legitimate chance to compete. If you find two domains weaker than yours on the first page, those are your targets. Count the weak spots. If the number is zero, move on.

Identify these specific vulnerabilities with a keyword intelligence platform that counts exactly how many of the current top-10 results come from sites weaker than yours. A number greater than zero confirms you can potentially displace them with great content without needing a massive backlink campaign. We look for at least one vulnerable spot before committing any content resources to a topic.
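The count itself is simple arithmetic once you have the top-10 results and an authority score for each domain. Here is a minimal sketch, assuming you exported that data from whichever tool you use. The `SerpResult` fields, the example domains, and every score below are hypothetical and only illustrate the logic.

```python
from dataclasses import dataclass


@dataclass
class SerpResult:
    position: int      # 1-based rank on the results page
    domain: str
    authority: float   # any consistent authority score (assumed 0-100 here)


def weak_spots(results, my_authority):
    """Return the top-10 results held by domains weaker than ours."""
    return [r for r in results if r.position <= 10 and r.authority < my_authority]


# Hypothetical SERP snapshot for one target keyword
serp = [
    SerpResult(1, "bigdirectory.example", 91),
    SerpResult(2, "enterprise-suite.example", 88),
    SerpResult(4, "niche-forum.example", 24),
    SerpResult(7, "local-agency.example", 31),
    SerpResult(11, "tiny-blog.example", 12),  # page two, so it is ignored
]

targets = weak_spots(serp, my_authority=35)
print(len(targets), [r.domain for r in targets])
# 2 ['niche-forum.example', 'local-agency.example']
```

If `targets` comes back empty, that is the "move on" signal described above.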

Validate volume only after confirming weakness

Most content workflows check search volume first. Reverse the order. Check search volume across winnable long-tail terms only after verifying a site-relative vulnerability exists. A high-volume keyword with zero weak spots is functionally worthless to a smaller domain.

Evaluate the easy-to-rank search volume, which calculates the total monthly searches across all the winnable keywords within a specific topic. That calculation shows the size of the prize if you target those accessible spots. For our boutique marketing agency, a niche term like "b2b saas content distribution agency" might have lower global volume but contains three weaker competitors on page one. We prioritize the accessible traffic over the theoretical maximum.
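To make that calculation concrete, here is a rough sketch that sums monthly volume only across keywords with at least one weak spot. The keyword rows, the volumes, and the `weak_spots` counts are made-up illustrative numbers, not real search data.

```python
# Hypothetical export for one topic: volumes and weak-spot counts are invented
topic_keywords = [
    {"keyword": "b2b saas content distribution agency", "monthly_volume": 90, "weak_spots": 3},
    {"keyword": "content marketing agency", "monthly_volume": 12000, "weak_spots": 0},
    {"keyword": "saas content distribution checklist", "monthly_volume": 70, "weak_spots": 1},
]


def easy_to_rank_volume(rows):
    """Sum monthly searches across keywords that have at least one weak spot."""
    return sum(r["monthly_volume"] for r in rows if r["weak_spots"] > 0)


print(easy_to_rank_volume(topic_keywords))  # 160
```

The 12,000-search head term contributes nothing to the total because no weaker site ranks for it.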

Estimate the viable traffic prize

Isolate the weak spots and calculate the estimated monthly traffic available strictly from displacing those weaker domains. Don't calculate the traffic for position one if position one is held by a legacy tech giant you can't unseat.

Traffic projections based on capturing position seven or eight set realistic expectations and focus your resources on achievable wins. Keep the math grounded in site-relative reality to avoid wasting content budget on unwinnable fights.
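One way to keep that math grounded is to estimate clicks only for the best position a weaker site currently holds. In the sketch below, the click-through rates in `ASSUMED_CTR` are placeholder assumptions rather than measured values, so swap in figures from your own Search Console data before trusting any output.

```python
# Placeholder click-through rates by position. These are illustrative
# assumptions, not measured values; replace them with your own data.
ASSUMED_CTR = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
               6: 0.04, 7: 0.03, 8: 0.025, 9: 0.02, 10: 0.02}


def viable_monthly_clicks(monthly_volume, weak_positions):
    """Estimate clicks from taking the best top-10 position held by a weaker site."""
    if not weak_positions:
        return 0.0  # no weak spot means no realistic prize
    best_position = min(weak_positions)  # lowest number is the highest rank
    return monthly_volume * ASSUMED_CTR[best_position]


# A keyword with 1,000 monthly searches where weaker sites hold positions 7 and 9
realistic = viable_monthly_clicks(1000, weak_positions=[7, 9])
theoretical = 1000 * ASSUMED_CTR[1]
print(f"realistic: {realistic:.0f} clicks, position-one estimate: {theoretical:.0f} clicks")
```

Comparing the two numbers side by side makes it obvious how much a position-one projection overstates the prize when position one is out of reach.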

Step 2: Map the SERP for hidden weak spots

Visually assess the domain footprints

Open the search results and look at who occupies the first page. A visual SERP analysis tool that shows competitor favicons directly in the Google top 10 view lets you gauge at a glance what caliber of brands currently dominate the first page.

If you see nine massive software directories and one small personal blog, you have found a crack in the armor. You're not trying to beat the directories. You're targeting the blog. Visual footprint mapping prevents you from fighting the wrong battle.

Identify structural format failures

Weaker domains often rank simply because the SERP lacks proper content formats. Look for structural weaknesses in the current results. Outdated timestamps from five years ago signal stagnation. Unstructured forum threads on Reddit or Quora indicate a lack of dedicated editorial content. Mismatched formats, like a generic product page ranking for a "how-to" informational query, show the search engine settling for a suboptimal result.
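Those three signals are easy to check in bulk once you have noted each result's domain, last-updated date, and page format. The following is a sketch under assumptions: the dictionary keys, the format labels, and the five-year staleness threshold are all hypothetical choices, not an established standard.

```python
from datetime import date

# Forum domains named in the text; extend the set for your own niche
FORUM_DOMAINS = {"reddit.com", "quora.com"}


def structural_flags(result, expected_format, today, stale_after_years=5):
    """List the structural weaknesses found in one ranking result."""
    flags = []
    age_years = (today - result["last_updated"]).days / 365.25
    if age_years >= stale_after_years:
        flags.append("outdated")
    if result["domain"] in FORUM_DOMAINS:
        flags.append("forum_thread")
    if result["format"] != expected_format:
        flags.append("format_mismatch")
    return flags


today = date(2024, 6, 1)  # fixed date so the example is reproducible
forum_result = {"domain": "reddit.com", "last_updated": date(2018, 3, 1), "format": "forum"}
vendor_result = {"domain": "vendor.example", "last_updated": date(2024, 1, 15), "format": "product_page"}

print(structural_flags(forum_result, "how_to_guide", today))
# ['outdated', 'forum_thread', 'format_mismatch']
print(structural_flags(vendor_result, "how_to_guide", today))
# ['format_mismatch']
```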

When a weak domain ranks despite a structural flaw, the search engine is likely settling for the best result available. A specialized boutique marketing agency has a realistic chance of outranking a generic forum thread by publishing a comprehensive, updated guide on that exact topic.

Isolate competitor traffic drivers

Reverse-engineer the weak sites that made it to page one. Analyze their traffic to extract the specific low-competition keywords driving their organic wins. If a site with lower domain authority than yours holds position four, analyze that specific page URL to see what secondary terms it captures.

Competitor analysis platforms show the exact keywords driving their traffic and highlight the low-competition gaps they missed entirely. Finding the weak site is the first step. Extracting the exact keyword variations driving their traffic allows you to capture those remaining clicks.

Step 3: Extract conversational long-tail keywords

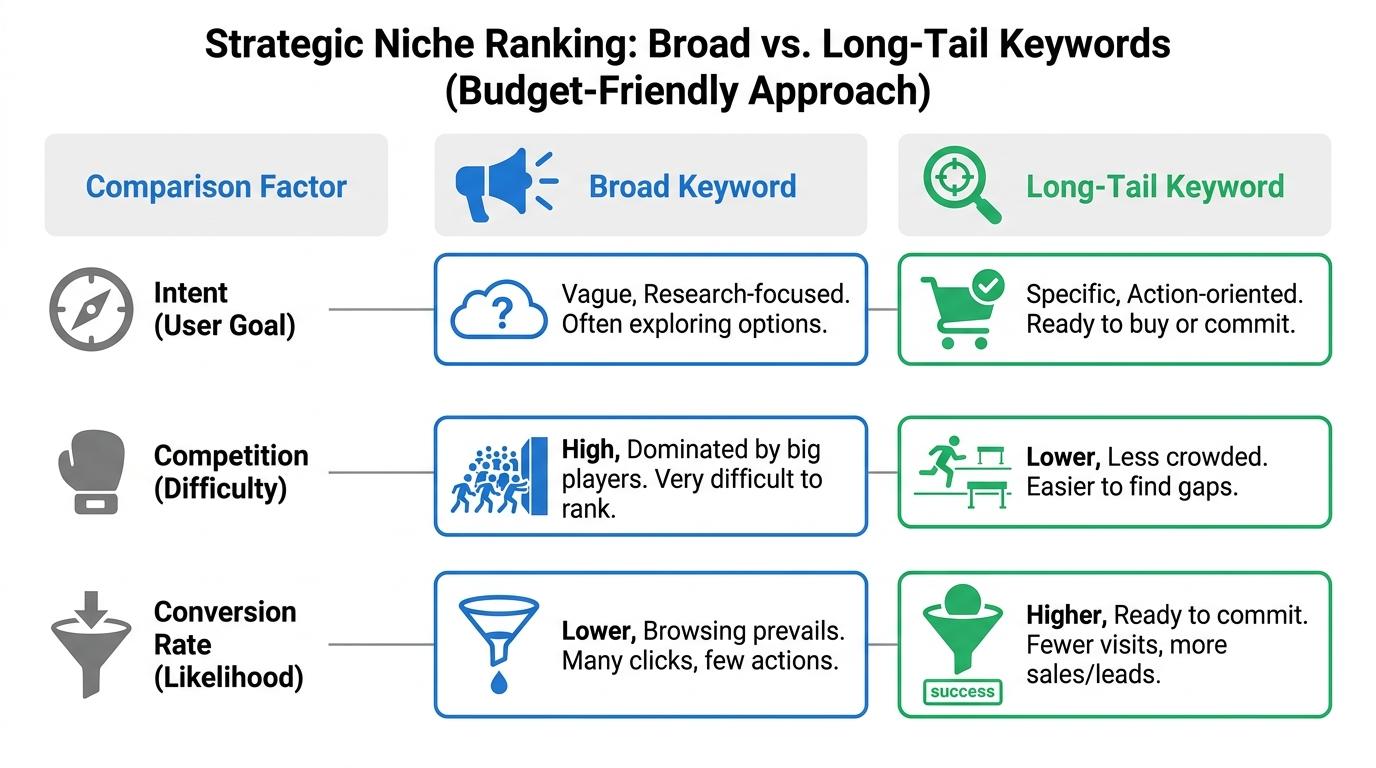

Map multi-word customer pain points

Broad keywords lack specific intent and attract the heaviest competition. Long-tail keywords survive because they reflect deep, concrete customer pain points. Identify exact, multi-word queries that users type when they're frustrated with generic solutions.

Our boutique agency should ignore a broad term like "marketing automation" and target "how to migrate from hubspot to activecampaign for b2b saas." The latter is conversational, highly specific, and frequently ignored by giant directories. Multi-word phrases convert better because the user knows exactly what they want.
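A crude first pass at separating broad terms from conversational ones is a word-count filter. The sketch below assumes a four-word minimum, which is an arbitrary threshold chosen for illustration; tune it against your own keyword list.

```python
def is_long_tail(query, min_words=4):
    """Treat a query as long-tail when it has at least min_words words."""
    return len(query.split()) >= min_words


candidates = [
    "marketing automation",
    "how to migrate from hubspot to activecampaign for b2b saas",
    "marketing automation software",
]

long_tail = [q for q in candidates if is_long_tail(q)]
print(long_tail)
# ['how to migrate from hubspot to activecampaign for b2b saas']
```

Word count is only a proxy for specificity, so review the survivors by hand before building pages around them.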

Uncover competitor keyword gaps

Large competitors leave gaps because they focus heavily on broad, high-volume terms. Use keyword gap features in platforms like SEO PowerSuite, which offers tools like Rank Tracker to analyze competitor ranking keywords and uncover content gaps. Similarly, you can use SpyFu to identify organic keyword overlaps among competitors.

Run the weaker domains you found in the previous step through these gap analysis tools. You'll uncover overlapping opportunities that larger competitors ignore. The weaker domains are your scouts, highlighting which conversational terms the massive brands left undefended.
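If your tools only export raw keyword lists, the same gap can be approximated with set arithmetic. This sketch assumes three exported lists (the weaker domain's keywords, the large brands' keywords, and your own); the function name and sample keywords are hypothetical.

```python
def normalize(keywords):
    """Lowercase and trim keywords so exports from different tools compare cleanly."""
    return {k.strip().lower() for k in keywords}


def undefended_keywords(weak_site, big_brands, mine):
    """Keywords the weaker site ranks for that neither the big brands nor we cover."""
    return sorted(normalize(weak_site) - normalize(big_brands) - normalize(mine))


weak_site = ["HubSpot to ActiveCampaign migration", "crm migration checklist", "marketing automation"]
big_brands = ["marketing automation", "crm software"]
mine = ["crm migration checklist "]

print(undefended_keywords(weak_site, big_brands, mine))
# ['hubspot to activecampaign migration']
```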

Filter for clear search intent

Every list of long-tail terms requires intent filtering. Prioritize terms showing distinct informational or transactional intent. What does someone typing "best marketing automation for 10 person team" actually want? They want a comparative review or a decision framework, not a vendor pricing page.

Pages still rank sometimes when we miss that distinction, but they don't convert. Group your extracted terms into distinct intent buckets before outlining the content. The gap between ranking and converting is almost always an intent-mapping failure.
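A simple way to start bucketing is to look for modifier words in each query. The modifier lists and bucket names below are heuristic assumptions for illustration, not a definitive taxonomy, and anything labeled "unclear" still needs a manual look at the live SERP.

```python
# Heuristic modifier lists (assumed for this sketch; extend for your niche)
INTENT_MODIFIERS = {
    "informational": ("how to", "what is", "guide", "tutorial"),
    "comparison": ("best", "vs", "review", "alternatives"),
    "transactional": ("pricing", "buy", "demo"),
}


def classify_intent(query):
    """Return the first intent bucket whose modifier appears as a whole word or phrase."""
    padded = f" {query.lower()} "
    for intent, modifiers in INTENT_MODIFIERS.items():
        if any(f" {m} " in padded for m in modifiers):
            return intent
    return "unclear"


for q in [
    "best marketing automation for 10 person team",
    "how to migrate from hubspot to activecampaign for b2b saas",
    "marketing automation pricing",
    "marketing automation",
]:
    print(f"{classify_intent(q):14} {q}")
```

Padding the query with spaces keeps short modifiers like "vs" from matching inside longer words.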

Step 4: Build self-reinforcing topic clusters

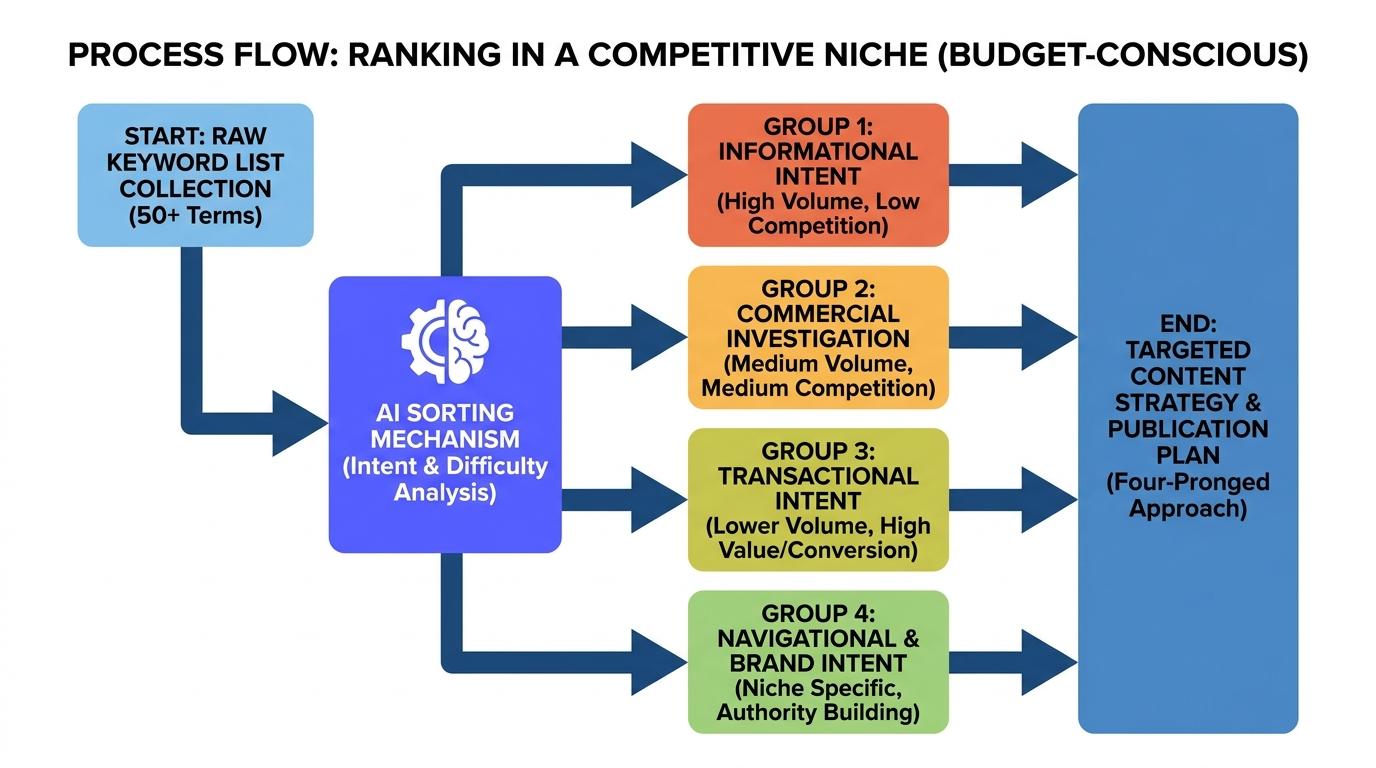

We extracted a list of conversational long-tail queries. Now we need to organize them. A standalone page targeting a single long-tail keyword rarely survives long against massive domains, even when you find a temporary SERP vulnerability. The defense against high-authority sites is a tightly woven topic cluster. Group individual, low-competition queries into logical clusters governed by search intent to build the topical relevance search engines reward.

Group queries by search intent, not string similarity

Novice SEO campaigns often group keywords based on matching words. They put "B2B SaaS marketing automation" and "marketing automation B2B case studies" on the same page simply because the strings look similar. That structural flaw creates pages that fail to satisfy what the user actually wants.

When you group keywords by shared SERP overlap, each page targets a distinct intent, which reduces internal competition. If Google shows mostly the same URLs for two different long-tail phrases, those phrases belong on the same page. If the search results show entirely different types of content, they require separate pages. Intent mapping dictates the architecture. For our boutique marketing agency, "automation tools for SaaS" demands a listicle, while "how to set up automation workflows" requires a tutorial. Trying to combine them dilutes relevance.
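The overlap rule can be sketched as a greedy grouping: a keyword joins the first cluster whose seed SERP shares enough URLs with its own, and otherwise starts a new cluster. The threshold of seven shared URLs out of ten is an assumption standing in for "mostly the same," and the example URLs are invented.

```python
def cluster_by_serp_overlap(serps, min_shared=7):
    """Group keywords whose top-10 URLs overlap with a cluster's seed keyword."""
    clusters = []  # each cluster keeps the seed keyword's URLs and its member keywords
    for keyword, urls in serps.items():
        url_set = set(urls)
        for cluster in clusters:
            if len(url_set & cluster["seed_urls"]) >= min_shared:
                cluster["keywords"].append(keyword)
                break
        else:  # no existing cluster matched, so this keyword needs its own page
            clusters.append({"seed_urls": url_set, "keywords": [keyword]})
    return [c["keywords"] for c in clusters]


# Invented top-10 URL lists for three queries
listicle = [f"https://site{i}.example/tools" for i in range(10)]
tutorial = [f"https://site{i}.example/setup-guide" for i in range(10)]

serps = {
    "automation tools for saas": listicle,
    "saas automation software": listicle[:8] + ["https://a.example/x", "https://b.example/y"],
    "how to set up automation workflows": tutorial,
}

for page_keywords in cluster_by_serp_overlap(serps):
    print(page_keywords)
# ['automation tools for saas', 'saas automation software']
# ['how to set up automation workflows']
```

Greedy grouping depends on the order of the input, so a dedicated clustering tool will usually give more stable results on large lists.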

Automate grouping logic to eliminate manual mapping

Imagine an SEO manager tasked with building authority around the broad topic of "SaaS CRM migrations." They pull hundreds of low-competition keywords but freeze when deciding which terms demand dedicated pages and which function purely as secondary synonyms. Manually mapping that architecture takes days of cross-referencing spreadsheets, often resulting in accidental keyword cannibalization when two pages end up targeting the same underlying intent.

You can replace that guesswork with AI grouping logic. Modern clustering tools analyze real search engine results at scale and group terms the way the search engine already categorizes them, based on URL overlap. You stop guessing and start matching the engine's established parameters. Upload your entire list of extracted vulnerabilities and you can see how many distinct pages you need to build.

Funnel relevance upward with internal linking

The cluster only works if authority flows efficiently between the pieces. A self-reinforcing architecture uses internal links to pass topical relevance from highly specific long-tail articles up to a broader, highly competitive core pillar page.

Create a comprehensive pillar covering the main topic, then build specific cluster pages for the long-tail vulnerabilities you identified. Link every specific cluster page directly to the main pillar using descriptive anchor text, and link the pillar back out to the supporting clusters. We've seen this hub-and-spoke configuration strengthen a smaller domain's perceived authority on a specific topic. You create a closed loop of tight relevance that large, generalized software directories cannot replicate without restructuring their entire sites.
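Checking that the loop is actually closed is a matter of comparing the links you need against the links you have. Here is a small sketch, assuming you can export existing internal links as (source, target) pairs from a site crawl; the page paths are hypothetical.

```python
def required_links(pillar, cluster_pages):
    """Every cluster page links to the pillar, and the pillar links back to each."""
    links = set()
    for page in cluster_pages:
        links.add((page, pillar))
        links.add((pillar, page))
    return links


def missing_links(pillar, cluster_pages, existing_links):
    """Return the hub-and-spoke links that are not on the site yet."""
    return sorted(required_links(pillar, cluster_pages) - set(existing_links))


pillar = "/crm-migration"
cluster_pages = ["/hubspot-to-activecampaign", "/custom-field-mapping"]
existing_links = [
    ("/hubspot-to-activecampaign", "/crm-migration"),
    ("/crm-migration", "/hubspot-to-activecampaign"),
    ("/custom-field-mapping", "/crm-migration"),
]

print(missing_links(pillar, cluster_pages, existing_links))
# [('/crm-migration', '/custom-field-mapping')]
```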

Step 5: Optimize content for search intent and E-E-A-T

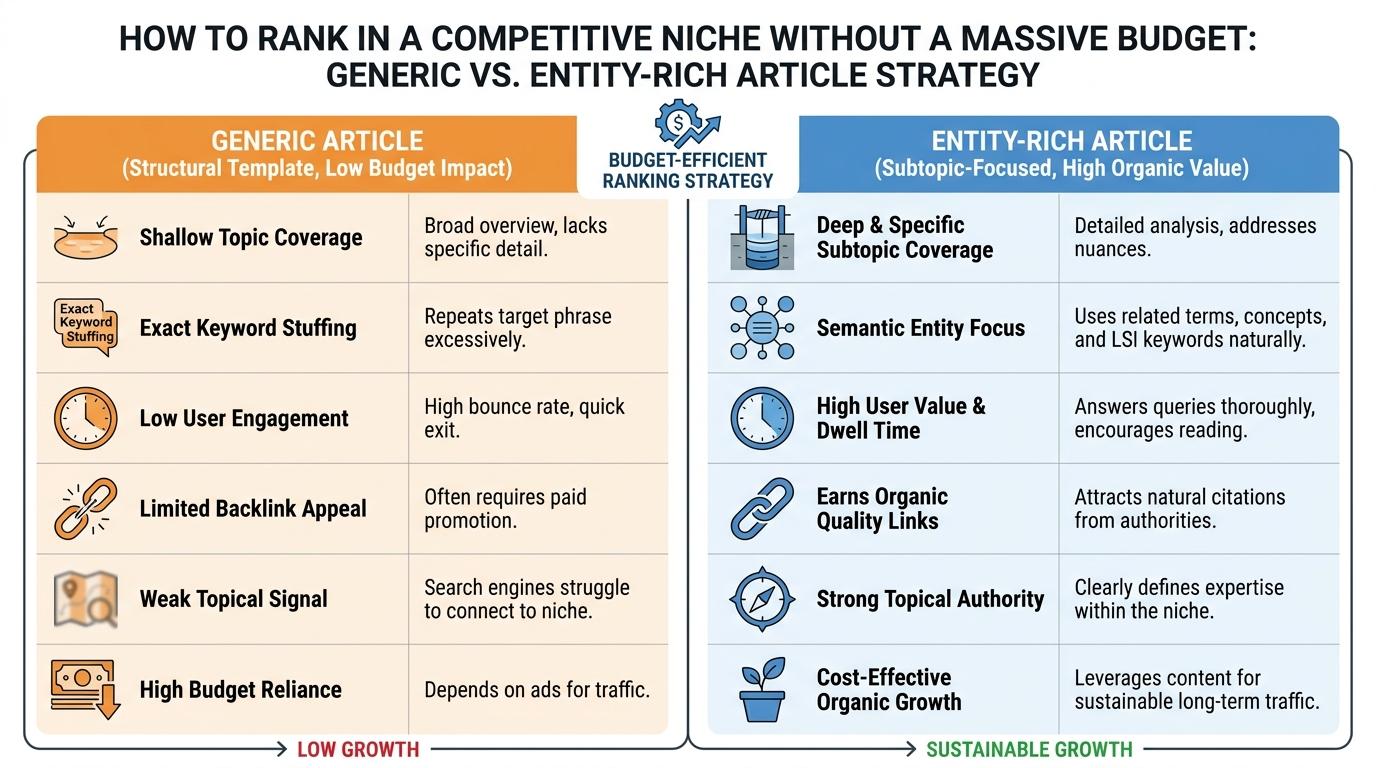

We found the weak spots and clustered the keywords. Now we write the actual pages. A content director looking at a cluster of winnable keywords often faces a strict bottleneck. They need to efficiently produce drafts that match the structure and depth of the top-ranking pages without spending days individually researching every single query. The solution requires balancing structural imitation with unique, firsthand expertise.

Match the expected structure of top results

Search engines rank specific formats for specific queries because user behavior proved that format works. Look closely at the weaker domains currently holding the easy-to-rank spots you targeted. Identify the underlying container they use to deliver information.

If the top results use a step-by-step tutorial format with comparison tables, we recommend building a step-by-step tutorial with better comparison tables. If the top results are dense glossaries, write a highly structured glossary. Match the container. Upgrade the insights. Breaking from the established structure risks missing what searchers expect to find, even if your writing quality is better.

Cover mandatory semantic entities

Search algorithms evaluate topical depth by checking for related concepts. If you write an authoritative guide on migrating CRM platforms but fail to mention "data export constraints," "custom field mapping," or "API call limits," the engine has less evidence of comprehensive expertise. We recommend covering the related entities a reader would expect to see for the topic.

Grade content relevance with tools like Clearscope, which analyze top results to build a required vocabulary list for your target topic. We usually recommend running your drafts through similar semantic analysis tools to catch obvious conceptual gaps. Hitting those semantic baselines proves to the crawler that your content belongs in the professional conversation. It's the minimum entry fee for ranking.
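Before paying for a grading tool, you can run a basic coverage check yourself. The sketch below does plain case-insensitive phrase matching against a list of expected entities. It is a simplification and not how Clearscope or any similar tool works internally, and the draft text is invented.

```python
def missing_entities(draft, entities):
    """Return the expected entities that never appear in the draft (case-insensitive)."""
    text = draft.lower()
    return [e for e in entities if e.lower() not in text]


expected = ["data export constraints", "custom field mapping", "API call limits"]

draft = (
    "Before migrating, review your data export constraints and plan the "
    "custom field mapping between the two CRM platforms."
)

print(missing_entities(draft, expected))
# ['API call limits']
```

Exact phrase matching misses synonyms and rephrasings, so treat the output as a prompt for review rather than a pass or fail grade.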

Inject firsthand experience to stand out from generic content

Hitting the semantic baseline gets your page indexed and evaluated. Winning the top spot requires something the massive generic directories simply don't possess: actual field experience. Large publisher sites rely heavily on high-volume, generalized content production. Smaller domains beat them using genuine Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T).

Embed concrete observations directly into the prose. For the boutique marketing agency, that means swapping out generic best practices for a specific paragraph detailing a friction point they encountered while migrating a real client last month. Include proprietary data points, screenshots of software configurations, or direct quotes from your internal specialists. Generic sites cannot fake specific implementation hurdles. The bots measure the semantic baseline, but sustained user engagement metrics reward actual, hard-won expertise.

Frequently asked questions

How do you rank in a competitive niche?

Can you compete with established brands without a large marketing budget?

Which SEO factor matters most in a crowded market?

How can you measure if a competitive SEO strategy is working?

Outsmart your competitors and capture profitable search traffic

Stop guessing which keywords to target and start finding specific search result vulnerabilities. Learning how to rank in a competitive niche becomes straightforward when you rely on site-relative data. Start by finding one weak domain on the first page.