How to find easy-to-rank keywords by targeting SERP weak spots

Many marketers assume you need a large backlink budget to rank for competitive terms, but there's an alternative strategy few of them consider. A Databox survey shows SEO professionals rely heavily on generic difficulty metrics and routinely abandon higher-difficulty keywords. SalesHive data indicates teams spend an average of at least $8,400 per month on backlinks to compete. You don't need that budget. To find easy-to-rank keywords, ignore generic difficulty scores and analyze the search results for specific vulnerabilities. Look for search engine results pages (SERPs) where the top-ranking sites have thin content, weak backlink profiles, or lower domain authority than yours. Those are the pages you can outrank on content quality alone.

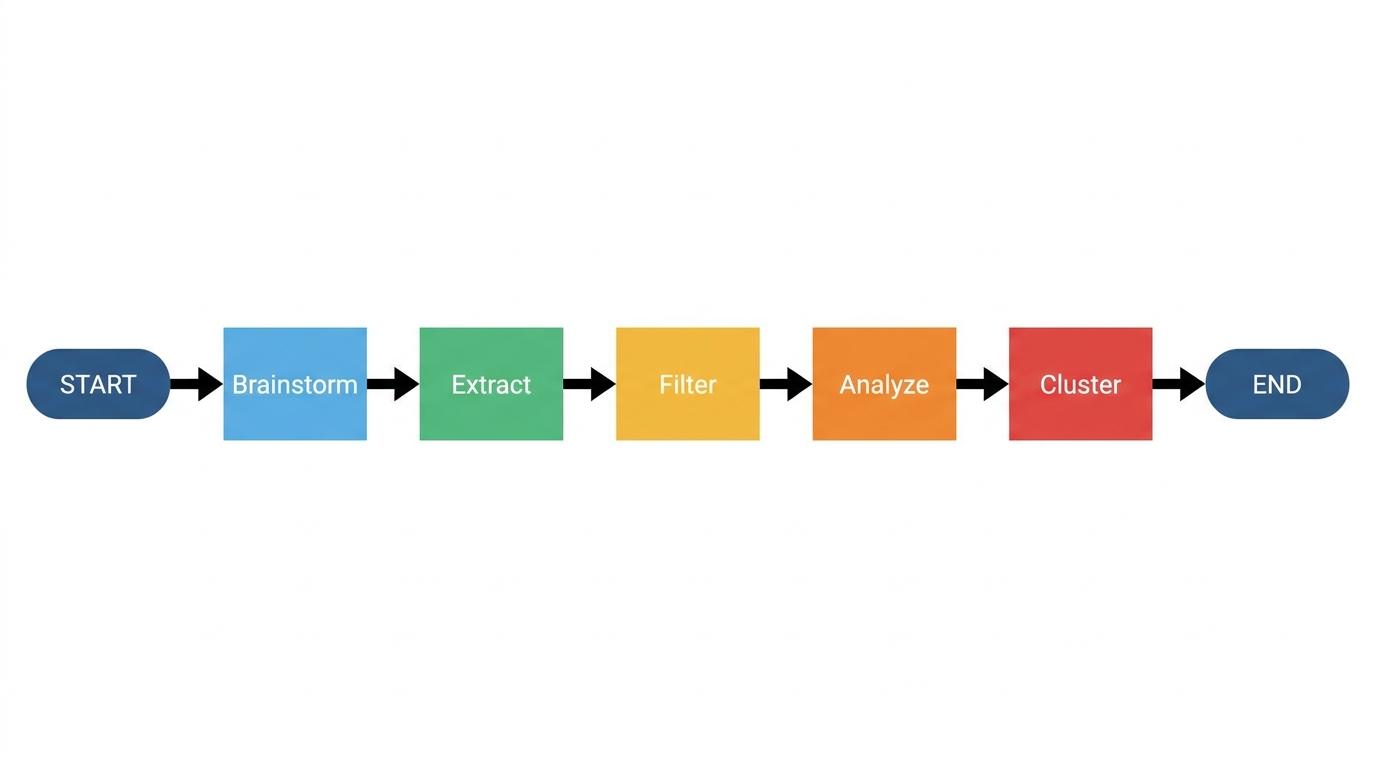

This guide covers a five-step methodology for identifying and capturing vulnerable search engine results based on your site's specific authority.

Understanding keyword difficulty and site-relative competition

The illusion of the 0-100 scale

Most mainstream SEO platforms calculate a single, static number to represent ranking difficulty. According to Semrush, its keyword difficulty score analyzes over 10 parameters, including referring domains, authority scores, search intent signals, and SERP features, and it ranges from 0 to 100. But a low generic score doesn't guarantee a win. An outdoor gear blog might target a "low difficulty" tent review and spend a week writing the article. It could then run into established outdoor magazines and stall on page four.

In our experience reviewing niche site failures, the most common root cause is trusting a generic difficulty score of 20 when the SERP is already saturated with authoritative domains. Ahrefs research covering millions of pages found that 96.55% of indexed content gets zero search traffic. It also found that the rare pages ranking with zero backlinks sit almost exclusively on large legacy websites. Those pages inherit link equity from the rest of their domain.

Defining site-relative difficulty

You need a metric that measures the actual gap between the sites currently ranking and your specific domain. This is site-relative difficulty. It measures whether a search result is vulnerable to your website specifically, rather than to an internet-wide average. When niche site owners look for openings based explicitly on their own, lower authority, they stop fighting uphill battles against legacy brands.

What makes a keyword easy to rank isn't a raw score but the presence of specific, beatable pages in the top search results. Low-difficulty keywords matter for new websites because targeting these gaps generates initial organic traffic while overall domain authority is still developing.
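The Python sketch below makes the idea concrete with one possible site-relative difficulty metric. It assumes you already have authority scores on a 0-100 scale for your own domain and for each domain in the top 10. The function name and the "share of stronger domains" formula are illustrative assumptions. They are not how RankDots or any other tool computes its scores.

```python
def site_relative_difficulty(your_authority: float, serp_authorities: list[float]) -> float:
    """Hypothetical metric: share of top-10 results whose domain authority exceeds yours.

    0.0 means every ranking domain is weaker than your site; 1.0 means every one is stronger.
    """
    top_ten = serp_authorities[:10]
    if not top_ten:
        raise ValueError("need at least one ranking result")
    stronger = sum(1 for authority in top_ten if authority > your_authority)
    return stronger / len(top_ten)


# One SERP, scored for two different sites (authority values are made up).
serp = [92, 88, 71, 45, 38, 33, 30, 27, 22, 18]
print(site_relative_difficulty(35, serp))  # small site: 0.5
print(site_relative_difficulty(90, serp))  # large site: 0.1
```

The same SERP scores 0.5 for the smaller site and 0.1 for the larger one. A single generic score would assign both sites the same number.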

Identifying high-intent low-competition keywords

The trap of binary search intent

Standard tools force a single label on a search query. Binary labels like "informational" or "transactional" fail to capture how people actually search. Picture an SEO manager preparing a content brief, unsure if a target term requires a standard blog post or a product review format because the intent appears mixed. When you rely on binary labels, you either miss the educational traffic or completely fail to monetize the page.

Verifiable intent distribution

We recommend structuring pages around verifiable intent distributions expressed as percentages. With RankDots, you can calculate these distributions instead of relying on single labels. For example, you might see that a query is 55% informational and 30% commercial. This granularity shows how to blend informational depth with commercial conversion paths on a single page.
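One simple way to approximate an intent distribution yourself is to label the intent each ranking result serves and count the labels. The sketch below assumes you have already assigned those labels, by hand or otherwise, for a hypothetical top 20. It illustrates the arithmetic only and is not how RankDots derives its percentages.

```python
from collections import Counter


def intent_distribution(result_intents: list[str]) -> dict[str, int]:
    """Hypothetical approach: percentage of ranking results that serve each intent."""
    if not result_intents:
        return {}
    counts = Counter(result_intents)
    total = len(result_intents)
    # Whole percentages, most common intent first; rounding can make the sum drift slightly from 100.
    return {intent: round(100 * n / total) for intent, n in counts.most_common()}


# Intent labels assigned by hand to a hypothetical top 20 results.
labels = ["informational"] * 11 + ["commercial"] * 6 + ["navigational"] * 3
print(intent_distribution(labels))
# {'informational': 55, 'commercial': 30, 'navigational': 15}
```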

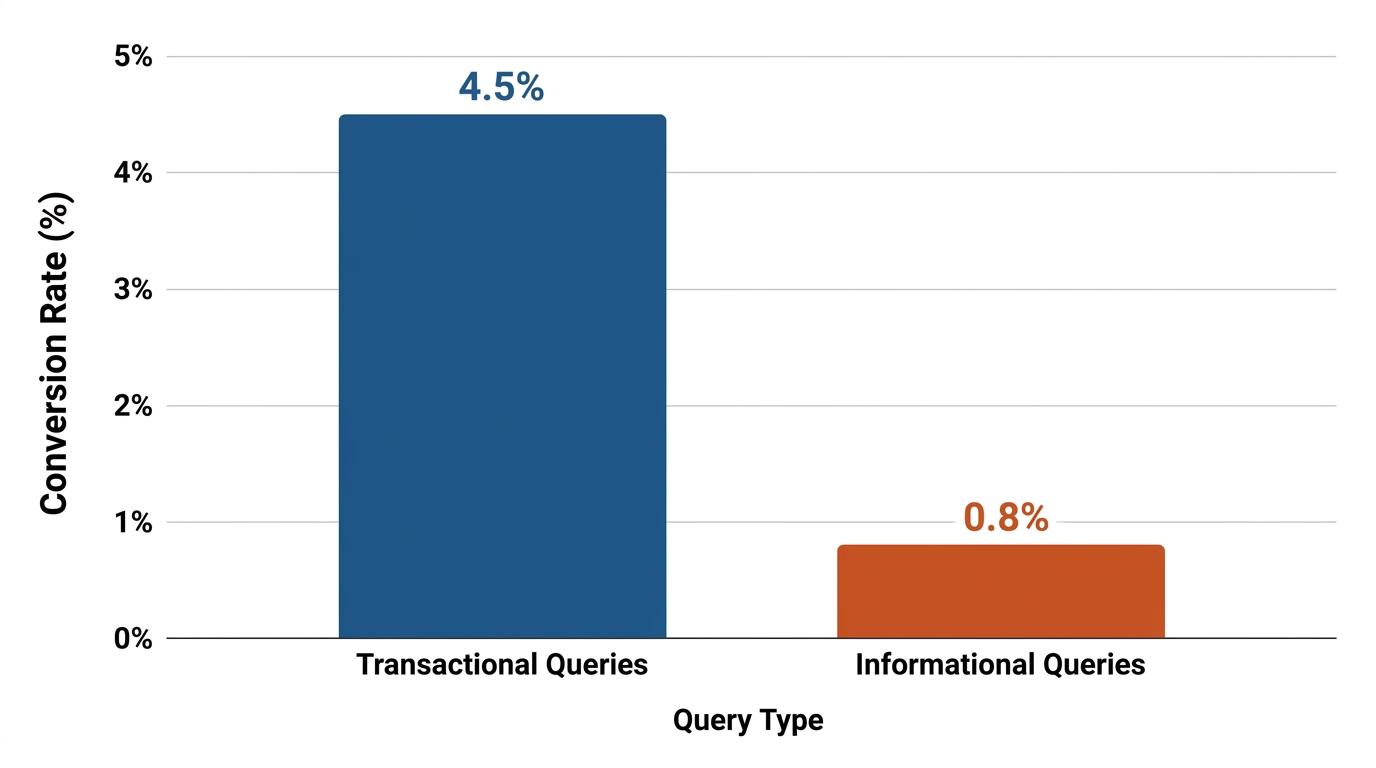

Your site makes money when you balance achievable low-competition traffic against actual commercial value. WordStream industry benchmarks show that landing pages targeting transactional search queries routinely convert at 2% to 5% or higher. Purely informational pages typically convert below 1%. A blended approach secures traffic while keeping a path to revenue, which is why intent mapping drives revenue.

Step 1: Brainstorm seed topics and concepts

Start by expanding your core business offerings into broad topic buckets before looking at granular keyword metrics. A niche outdoor blog shouldn't just plug "tents" into a tool. It should map out "cold weather camping setups" and "ultralight trail gear" as foundational seed categories. Content directors who push large batches of long-tail queries through legacy SEO suites often hit those suites' hard usage limits. Starting from broad categories keeps lookups focused and prevents that resource drain.

Pull broad market terminology from major ad platforms and established keyword databases. Google Keyword Planner, for example, generates keyword ideas and related search terms from Google search data. Platforms like Moz can supply broader cluster terminology.

With over 1.25 billion keyword suggestions available across major databases, you want to capture the widest possible terminology set first. Prioritize these initial topic clusters based on your current business objectives and inventory. Ignore raw search volume at this stage. The goal here is idea volume, not search volume.

When selecting free and paid tools for keyword research, prioritize platforms that cast the widest possible net. Free options like Google Keyword Planner provide foundational volume, while paid databases reveal competitor terminology. RankDots pulls from multiple data sources simultaneously (including autocomplete and related searches) so you don't miss hidden long-tail opportunities before filtering.

Step 2: Extract keyword ideas from competitors

Identify competitor sites that share your approximate domain strength and target audience. If your outdoor gear blog has a lower domain rating, find other mid-tier sites that are doing well. Don't extract keyword targets from market leaders. Those legacy sites rank on historical domain authority, and their established backlink profiles usually outweigh the quality of new content.

Run a competitive gap analysis to pull the specific terms smaller competitors already rank for. Platforms like Ahrefs let you cross-reference multiple competitors at once, and Ahrefs' Keywords Explorer adds keyword clustering and difficulty analysis. We frequently see this gap analysis uncover terms you never realized your audience was searching for.

Watch for warning signs during extraction. If a competitor ranks for a term but the ranking page has hundreds of referring domains pointing directly at it, abandon the keyword. You want terms where the competitor ranks on page relevance, not on artificial link velocity.
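If you export a competitor's ranking keywords to a spreadsheet, you can apply this warning sign as a simple filter. The sketch assumes each row carries the number of referring domains pointing at the competitor's ranking URL. The field names and the cutoff of 100, which stands in for "hundreds," are illustrative assumptions you would tune for your niche.

```python
from dataclasses import dataclass


@dataclass
class CompetitorKeyword:
    keyword: str
    page_referring_domains: int  # referring domains linking to the competitor's ranking URL


def relevance_driven_keywords(rows: list[CompetitorKeyword], max_page_rds: int = 100) -> list[str]:
    """Keep keywords whose ranking page is not propped up by a heavy link profile."""
    return [row.keyword for row in rows if row.page_referring_domains < max_page_rds]


rows = [
    CompetitorKeyword("winter tent condensation", 12),
    CompetitorKeyword("best ultralight tent", 340),
    CompetitorKeyword("how to pitch a tent in snow", 4),
]
print(relevance_driven_keywords(rows))
# ['winter tent condensation', 'how to pitch a tent in snow']
```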

Step 3: Filter for site-relative difficulty metrics

Applying personalized filters

Stop relying on generic search volume limits. Apply filters that isolate topics where the current top-ranking pages are measurably weaker than your domain. With RankDots, you can calculate competition relative to your own website's domain and page authority. That shows which competitor pages are genuinely strong and which are legacy rankings you can displace. Calculate the authority gap between your site and the weakest competitor in the top ten to turn a broad spreadsheet into a prioritized content calendar.
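Here is a rough sketch of the gap calculation. It assumes you have an authority score for your site and for each domain in a query's top 10. It subtracts the weakest ranking domain's score from yours, then sorts keywords by that gap. The function names and sample scores are hypothetical, and the sketch uses domain-level scores only, whereas RankDots is described as also factoring in page authority.

```python
def authority_gap(your_authority: float, serp_authorities: list[float]) -> float:
    """Your authority minus the weakest domain in the top 10 (positive = a weaker site is ranking)."""
    return your_authority - min(serp_authorities[:10])


def prioritize(your_authority: float, serps: dict[str, list[float]]) -> list[str]:
    """Order keywords so the largest gap in your favor comes first."""
    return sorted(serps, key=lambda kw: authority_gap(your_authority, serps[kw]), reverse=True)


# Sample SERPs shortened to five results each for brevity; scores are made up.
serps = {
    "tent footprint sizing": [70, 55, 40, 22, 18],
    "best 4 season tent": [95, 91, 88, 80, 76],
    "stove fuel in cold weather": [60, 41, 30, 25, 12],
}
print(prioritize(35, serps))
# ['stove fuel in cold weather', 'tent footprint sizing', 'best 4 season tent']
```

A negative gap, as for "best 4 season tent," means even the weakest ranking domain is stronger than your site. That keyword belongs at the bottom of the calendar.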

The displacement mechanism

Displacing a legacy ranking follows a simple mechanism. First, you identify a generic page that ranks primarily on site-wide authority rather than tight topical relevance. Then you publish a highly targeted, intent-matched article. The search engine eventually tests your page against the broad legacy page on user engagement signals, and for that specific query, relevance tends to win.

Prioritizing the workflow

If you need faster organic growth for a new project, prioritize your content calendar by sorting page ideas on a dedicated metric. The "Easy-to-Rank Spots" workflow in RankDots explicitly computes the difference between what is easy for a large site and what is easy for a smaller one. Focus your writing resources on the ideas with the most immediate organic traffic potential.

To run this workflow, open the Pages view in your project and sort the generated page ideas by the Easy Traffic Wins metric. Then filter to pages where Easy-to-Rank Spots is greater than zero. The result is a list of topics whose current top 10 search results include domains weaker than yours. It shows exactly which keywords you can capture on content quality alone.
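If you keep page ideas in your own data, you can mirror this sort-and-filter step in a few lines. This is a hypothetical model, not the RankDots API. `PageIdea`, `easy_spots`, and the `est_monthly_traffic` field are illustrative names. Sorting by estimated traffic stands in for the Easy Traffic Wins metric, whose exact formula is not described here.

```python
from dataclasses import dataclass


@dataclass
class PageIdea:
    title: str
    serp_authorities: list[float]  # authority of each domain in the current top 10
    est_monthly_traffic: int  # hypothetical traffic estimate for the page idea


def easy_spots(idea: PageIdea, your_authority: float) -> int:
    """Count top-10 positions held by domains weaker than yours."""
    return sum(1 for authority in idea.serp_authorities[:10] if authority < your_authority)


def easy_wins(ideas: list[PageIdea], your_authority: float) -> list[PageIdea]:
    """Keep ideas with at least one easy spot, highest estimated traffic first."""
    candidates = [idea for idea in ideas if easy_spots(idea, your_authority) > 0]
    return sorted(candidates, key=lambda idea: idea.est_monthly_traffic, reverse=True)


ideas = [
    PageIdea("Winter camping checklist", [80, 62, 30, 28], 900),
    PageIdea("Best sleeping bags", [95, 90, 85, 82], 5000),
    PageIdea("Tent condensation fixes", [55, 33, 20], 400),
]
print([idea.title for idea in easy_wins(ideas, 35)])
# ['Winter camping checklist', 'Tent condensation fixes']
```

"Best sleeping bags" has the largest traffic estimate but zero easy spots for a site with an authority of 35, so the filter drops it.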

Step 4: Analyze SERP vulnerabilities and weak spots

Spotting the visual anomalies

Open the search results for your filtered keywords to confirm the weak spots actually exist. When you research a promising topic, a wall of e-commerce and encyclopedia sites is intimidating. You can't easily gauge competitor dominance without manually clicking through every Google result to check page metrics.

You can resolve this through visual competitor intelligence in RankDots, which displays the favicons of the websites currently ranking in the top 10 or 20. A quick scan of these favicons shows whether retail giants hold the top spots or if smaller niche blogs are successfully holding positions.

Confirming beatable weak spots

Look for outdated content, forum threads, or thin articles ranking on page one. With RankDots, you can identify weak spots where top-ranking pages have low authority or weak backlink profiles, which signals a clear vulnerability. Suppose a Reddit thread or a neglected 500-word post from four years ago holds the fourth position. Then you've found a target. You can outrank those specific pages through comprehensive content quality without acquiring new backlinks.
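Writing these checks down as explicit rules helps when several people review SERPs and need consistent criteria. This sketch assumes you have collected each result's domain, authority, word count, and publish date yourself. The forum list, the 500-word cutoff, and the three-year age threshold are illustrative assumptions loosely based on the examples above.

```python
from dataclasses import dataclass
from datetime import date

FORUM_DOMAINS = {"reddit.com", "quora.com"}  # illustrative, not exhaustive


@dataclass
class RankingResult:
    position: int
    domain: str
    domain_authority: float
    word_count: int
    published: date


def weak_spot_reasons(result: RankingResult, your_authority: float, today: date) -> list[str]:
    """Return the (hypothetical) rules this ranking result trips; an empty list means no weak spot."""
    reasons = []
    if result.domain in FORUM_DOMAINS:
        reasons.append("forum thread")
    if result.word_count <= 500:
        reasons.append("thin content")
    if (today - result.published).days > 3 * 365:
        reasons.append("outdated")
    if result.domain_authority < your_authority:
        reasons.append("weaker domain")
    return reasons


today = date(2025, 3, 1)
blog_post = RankingResult(4, "example-gear-blog.com", 24, 500, date(2021, 3, 1))
forum_thread = RankingResult(6, "reddit.com", 91, 350, date(2024, 11, 10))
print(weak_spot_reasons(blog_post, 35, today))  # ['thin content', 'outdated', 'weaker domain']
print(weak_spot_reasons(forum_thread, 35, today))  # ['forum thread', 'thin content']
```

The Reddit example shows why page-level checks matter. The domain is far stronger than yours, but the specific thread is still a beatable result.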

Targeting these gaps directly is how you rank for competitive keywords without backlinks. You skip the need for an established link profile by delivering superior, intent-matched content to an exposed SERP vulnerability. How long it takes to rank for a low-difficulty keyword with this method varies by search engine and niche. Still, highly relevant pages targeting verified weak spots often begin capturing traffic within a few weeks, faster than a traditional backlink-driven approach.

How to find easy-to-rank keywords in 5 steps

- Generate a broad keyword universe. Type broad category terms into a tool like Google Keyword Planner to gather a large list of phrases. You'll end up with an unrefined list of related search queries spanning different search intents.

- Extract mid-tier competitor targets. Run a gap analysis against niche competitors that share your approximate domain authority, explicitly avoiding large legacy brands. This yields a targeted list of search terms proven to rank without enterprise backlink budgets.

- Filter by site-relative difficulty. Open your workspace and filter to queries where the Easy-to-Rank Spots metric is greater than zero. This pinpoints topics where the current top results include domains weaker than yours.

- Visually inspect SERP vulnerabilities. Scan the competitor favicons for your filtered keywords to spot outdated forum threads or neglected niche blogs. This visual check confirms you've found a vulnerable search result rather than one dominated by major publishers.

- Group targets into topic clusters. Group the remaining vulnerable targets into topic clusters based on the searcher's actual intent. You walk away with a structured, ready-to-write content calendar focused entirely on achievable organic traffic.

Step 5: Build content clusters for topical authority

Group related long-tail terms to prevent content overlap and keyword cannibalization. Use a system that groups related search terms into ready-to-write semantic page ideas, so you don't have to stare at a raw spreadsheet of 10,000 individual queries. That structure shifts your focus from managing keywords to managing content assets.

You can use RankDots to automatically group related keywords into logical topic clusters. Each topic cluster maps directly to a recommended page based on shared search intent. This setup supports a pillar-and-cluster website architecture, where core guides link logically to specific, low-competition subtopics.
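RankDots' clustering method isn't described here. A common, tool-agnostic alternative is SERP-overlap clustering: if two keywords share several of the same top-10 URLs, one page can usually serve both. The minimal greedy sketch below assumes you have the top-10 URL set for each keyword. `min_shared=3` is an illustrative threshold, and the URLs are placeholders.

```python
def cluster_by_serp_overlap(serps: dict[str, set[str]], min_shared: int = 3) -> list[list[str]]:
    """Greedy SERP-overlap clustering.

    Each keyword joins the first cluster whose seed (first) keyword shares at least
    `min_shared` top-10 URLs with it; otherwise it starts a new cluster.
    """
    clusters: list[list[str]] = []
    for keyword, urls in serps.items():
        for cluster in clusters:
            if len(urls & serps[cluster[0]]) >= min_shared:
                cluster.append(keyword)
                break
        else:  # no existing cluster matched
            clusters.append([keyword])
    return clusters


serps = {
    "winter tent condensation": {"site-a.example/1", "site-b.example/2", "site-c.example/3", "site-d.example/4"},
    "how to stop tent condensation": {"site-a.example/1", "site-b.example/2", "site-c.example/3", "site-e.example/5"},
    "ultralight tent stakes": {"site-f.example/6", "site-g.example/7", "site-h.example/8"},
}
print(cluster_by_serp_overlap(serps))
# [['winter tent condensation', 'how to stop tent condensation'], ['ultralight tent stakes']]
```

Because assignment is greedy and compares each keyword only against a cluster's first member, the result depends on input order. A more robust version might compare against every member of a cluster.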

Move from raw clustered keyword lists to actionable, structured page ideas ready for content creation. Focus on the clusters that show multiple SERP vulnerabilities across several related terms to capture an entire subtopic quickly.


Next steps for your keyword strategy

This approach to finding easy-to-rank keywords fundamentally changes how you allocate writing resources. Shifting from generic difficulty scores to site-relative SERP vulnerabilities removes much of the traditional guesswork. You stop competing against massive domains and start displacing weaker articles on content relevance.

Take this methodology and apply it to your current content calendar immediately. Identify your broad seed topics, filter the data by your specific domain strength, visually verify the SERP vulnerabilities, and group the remaining targets into cohesive clusters. Execute that process consistently to capture traffic.

Find easy-to-rank keywords based on your site's actual authority.

Stop trusting generic difficulty scores that mask true competition. Calculate the exact authority gap for your specific website to uncover vulnerable search results. Start building topical relevance in areas where you can actually win.