How to build a data-driven Conversion Rate Optimization Strategy

You're pouring budget into traffic acquisition, but a leaky lower funnel quietly drains your ROI before users even reach the checkout page. A conversion rate optimization strategy relies on strict quantitative analytics and qualitative behavioral data. You identify actual friction points, prioritize testing hypotheses based on revenue impact, and implement validated improvements that lower your acquisition costs.

Recent studies from SimplicityDX show Customer Acquisition Costs (CAC) have increased by 60% over the last five years across B2B and eCommerce sectors. Some longer-term analyses indicate these acquisition costs have surged by as much as 222% over the past decade. An outdoor gear brand running expensive search ads for high-ticket items cannot afford a slow-loading landing page that spikes the bounce rate. That technical friction heavily increases acquisition costs by causing premature abandonment before the user ever reads the core value proposition. Generic UX checklists fail when profit margins face this much pressure.

Audit your site, prioritize tests, and consistently turn existing traffic into revenue using this seven-stage systematic framework.

Defining conversion rate optimization and baseline metrics

You can't optimize what you haven't accurately measured. A reliable optimization program requires separating vanity metrics from revenue drivers and establishing a clear mathematical starting point before running a single test.

Distinguishing macro from micro conversions

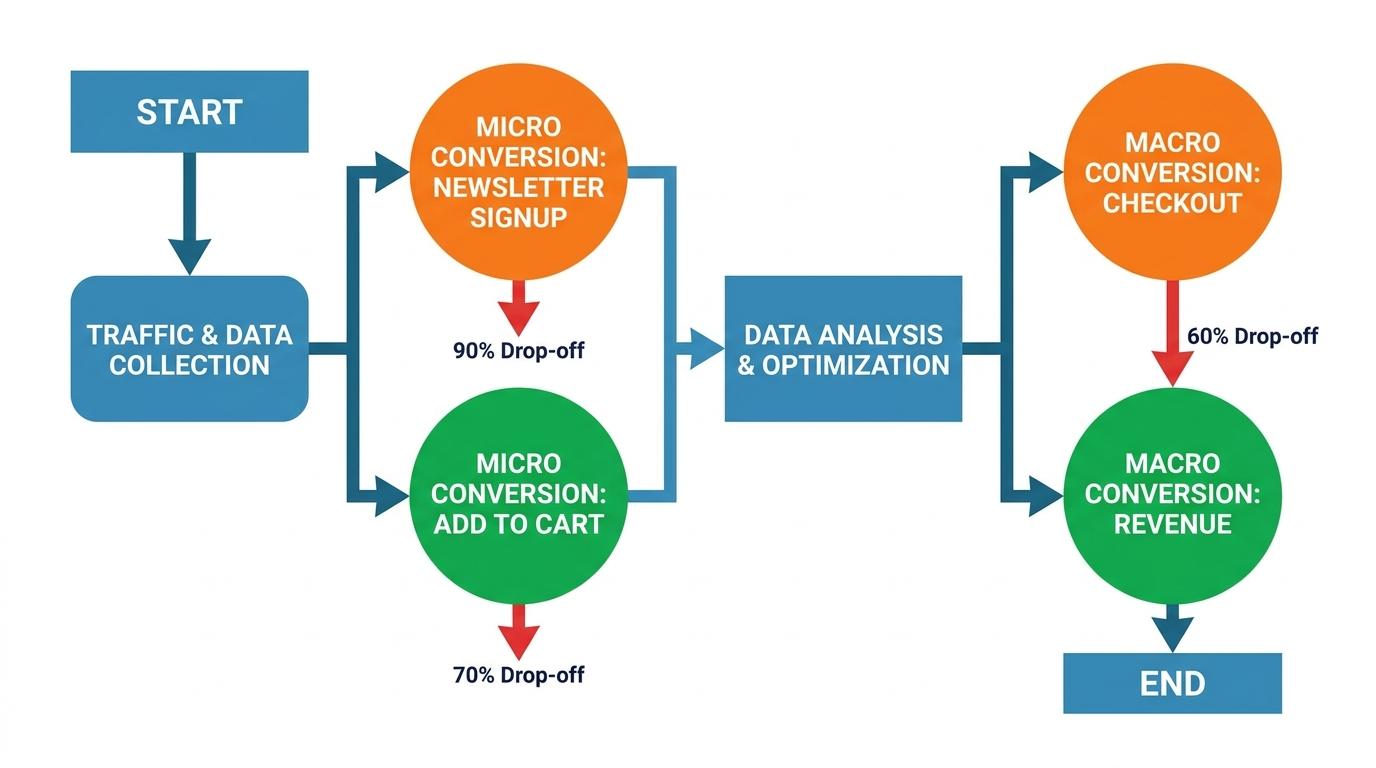

Most teams look at a single blended conversion rate in their primary analytics dashboard and assume it tells the whole story. When you treat all user actions as equal, you hide the specific friction points causing cart abandonment. We typically divide tracking goals into two distinct categories: macro and micro conversions.

Macro conversions represent the primary business objective of the page. For an ecommerce site selling outdoor gear, this is a completed purchase. For a B2B service, it's a qualified lead submission. These actions directly impact revenue.

Micro conversions are the incremental steps a user takes toward that primary goal. Adding a tent to the shopping cart, filtering hiking boots by size, or signing up for the trail-guide newsletter all indicate intent. Micro conversions tell you exactly where the user journey stalls. Healthy add-to-cart rates paired with low purchase rates indicate the product page works but the checkout flow needs fixing.
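One way to see where the journey stalls is to compute the step-to-step rate between consecutive micro conversions. The sketch below is illustrative only: the step names and counts are hypothetical placeholders, not figures from any real store.

```python
# Hypothetical counts of unique users reaching each funnel step.
# Replace these with numbers exported from your own analytics tool.
funnel = [
    ("product_view", 10_000),
    ("add_to_cart", 1_500),
    ("begin_checkout", 900),
    ("purchase", 90),
]


def step_rates(steps):
    """Return (from_step, to_step, rate) for each consecutive pair of steps."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(steps, steps[1:]):
        rate = count / prev_count if prev_count else 0.0
        rates.append((prev_name, name, rate))
    return rates


rates = step_rates(funnel)
for prev_name, name, rate in rates:
    print(f"{prev_name} -> {name}: {rate:.1%}")

# The lowest step-to-step rate is a starting point for investigation,
# not a verdict: each step should also be judged against its own history.
weakest = min(rates, key=lambda r: r[2])
print("Lowest step rate:", weakest[0], "->", weakest[1])
```

With these placeholder numbers, the add-to-cart step looks healthy while the checkout-to-purchase transition is the weakest, which is the pattern described above.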

Establishing reliable baselines

An ecommerce manager reviewing monthly analytics might see a stagnant 1.8% overall conversion rate and wonder if a complete site redesign is necessary. Without a reliable baseline context, it's impossible to determine if that metric represents a critical emergency or standard seasonal variance.

Calculate your baseline using unique users rather than total sessions. A user who visits your site four times before buying a $400 sleeping bag should count as one converted user, not three failed sessions and one success. Measuring performance by sessions artificially depresses your conversion rate and misrepresents how buyers behave during considered purchases. Always segment this baseline by traffic source and device type. High-intent paid search traffic will convert entirely differently than top-of-funnel organic social traffic, and desktop users almost always convert at a higher, more consistent rate than distracted mobile users.
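As a rough illustration of the difference, the sketch below computes a session-based rate, a user-based rate, and a user-based rate per segment. The session log, its field order, and the function names are all hypothetical; a real export will look different.

```python
from collections import defaultdict

# Hypothetical session log: (user_id, traffic_source, device, purchased)
sessions = [
    ("u1", "paid_search", "desktop", False),
    ("u1", "paid_search", "desktop", False),
    ("u1", "paid_search", "desktop", False),
    ("u1", "paid_search", "desktop", True),   # fourth visit converts
    ("u2", "organic_social", "mobile", False),
    ("u3", "organic_social", "mobile", False),
    ("u4", "paid_search", "mobile", True),
]


def session_rate(rows):
    """Share of sessions that ended in a purchase."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row[3]) / len(rows)


def user_rate(rows):
    """Share of unique users who purchased at least once."""
    if not rows:
        return 0.0
    converted = defaultdict(bool)
    for user_id, _source, _device, purchased in rows:
        converted[user_id] = converted[user_id] or purchased
    return sum(converted.values()) / len(converted)


def user_rate_by_segment(rows):
    """User-based rate for each (traffic_source, device) pair."""
    segments = defaultdict(list)
    for row in rows:
        segments[(row[1], row[2])].append(row)
    return {segment: user_rate(seg_rows) for segment, seg_rows in segments.items()}


print(f"Session-based: {session_rate(sessions):.1%}")
print(f"User-based:    {user_rate(sessions):.1%}")
for segment, rate in user_rate_by_segment(sessions).items():
    print(segment, f"{rate:.1%}")
```

Note the simplification: a user who visits from two sources or devices is counted once in each segment here. How you attribute such users is a decision to make explicitly before you fix your baseline.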

Contextualizing industry benchmark averages

The average conversion rate across all fourteen industries is 2.9%. Marketing teams often panic when their site sits at 2%, or celebrate prematurely when they hit 3.5%.

A different benchmark puts the median conversion rate across all industries at 6.6%. Figures like these depend heavily on the dataset behind them, and averages obscure the massive variance between high-volume, low-ticket impulse buys and considered purchases. A store selling $15 water bottles will naturally convert a higher percentage of visitors than a store selling $1,200 kayaks. Use industry benchmarks to anchor your expectations, but measure success exclusively against your own historical baseline. The goal is consistent improvement of your own metrics, not beating a generic industry average that includes entirely different business models.

Conducting behavioral and heuristic evaluations

Quantitative data tells you where users are leaving, but qualitative data explains why. Moving from guesswork to a structured strategy requires shifting your focus from what to change to how you decide what to change.

Spotting friction with session recordings and heatmaps

Website owners often install a free behavior tracking tool and spend hours watching users rage-click on non-clickable product features without knowing how to fix the underlying issue. Raw behavioral data without a structured, prioritized testing plan just creates technical overwhelm. To move from observation to execution, you need specific parameters for what constitutes actionable user friction.

Several platforms now capture this exact behavior, so you don't have to guess where friction occurs. Microsoft Clarity provides unlimited heatmaps and session recordings to track specific user navigation paths across your entire domain. Mouseflow automatically generates six distinct heatmap types to show exactly where attention wanes and which form fields cause hesitation. Hotjar generates visual heatmaps and scroll maps that reliably reveal false bottoms—areas where users mistakenly believe a page has ended because of awkward white space, heavy visual breaks, or misaligned CSS containers. These tools replace subjective design opinions with objective behavioral truth.

Look for specific friction markers rather than watching user recordings aimlessly. Rage clicks consistently indicate confusing UI elements or broken JavaScript components. Rapid, erratic cursor movement often signals immediate confusion or frustration with complex, dense pricing tables. U-turns occur when a user clicks a navigation link and immediately hits the back button, signaling a severe mismatch between the link text and the actual destination content. These patterns matter because they highlight actual, documented user struggle rather than theoretical UX flaws.

Running structured heuristic walk-throughs

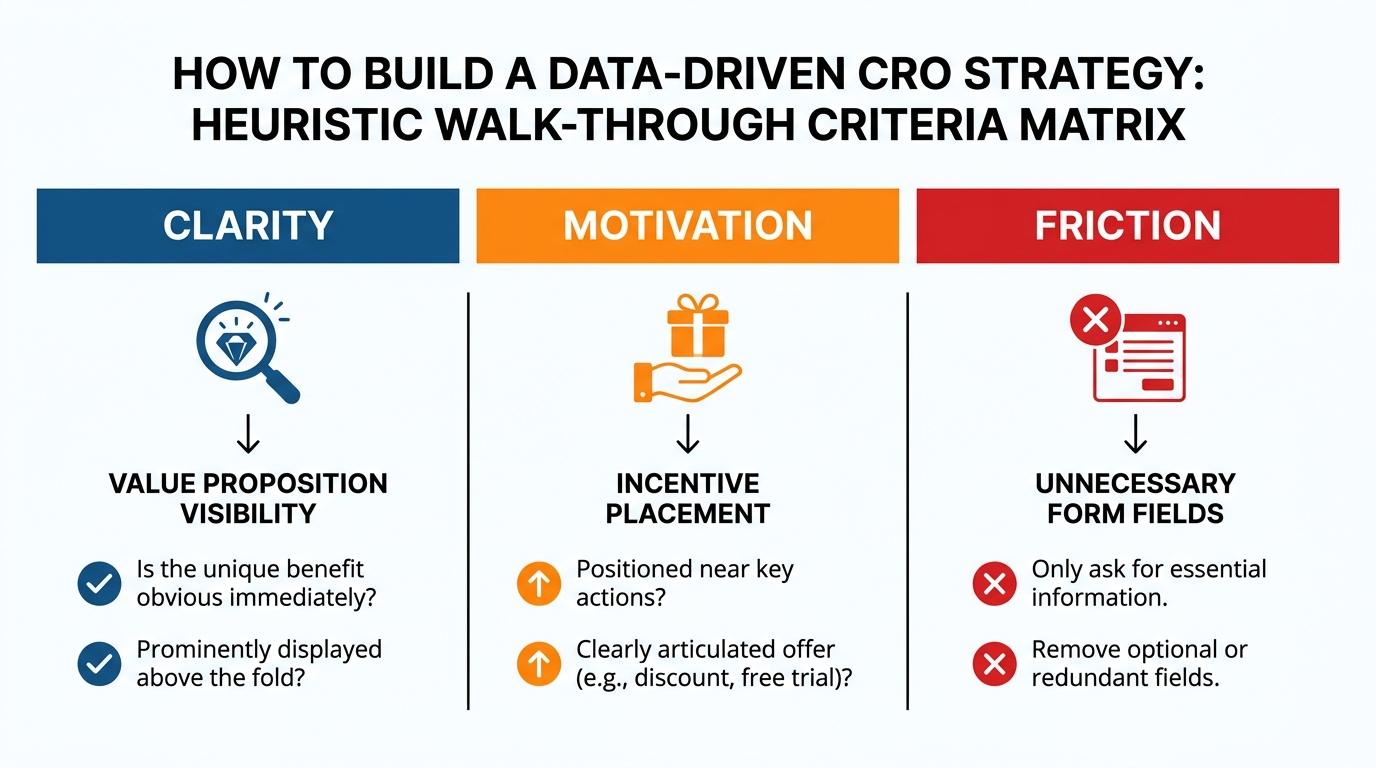

Software can't evaluate the persuasive quality of your copy. A heuristic walk-through requires your team to manually navigate the site while evaluating specific psychological and functional criteria.

Evaluate pages across three specific functional dimensions: clarity, motivation, and friction. For clarity, check if a first-time visitor can completely understand what you sell and who it serves within five seconds of loading. Motivation examines whether the page provides a compelling, immediate reason to act right now, such as limited stock indicators or a highly visible value proposition. Finally, friction identifies any functional element—like aggressive pop-ups or mandatory account creation—that makes the desired action significantly harder than it needs to be.

Walk through your checkout process on a mobile device while connected to a slow cellular network. The experience of trying to enter a credit card number into a non-responsive field while the page jumps around will expose friction points that dashboard metrics completely miss.

Documenting anomalies before designing solutions

The most common mistake is jumping straight from a frustrated user to a new design. You see users struggling with the shipping calculator, so you immediately brief a developer to build a new widget.

Write down the problem first. Document the specific behavior, the page where it occurs, and the device type involved. Framing the observation as a distinct problem ensures your eventual hypothesis addresses the root cause rather than just treating a symptom. If users ignore the shipping calculator, the problem might not be the widget's design. The text might be too small, or the placement might be below the fold on mobile screens. Writing down the problem separates the diagnosis from the prescription.

Prioritization frameworks: Using PIE and ICE models

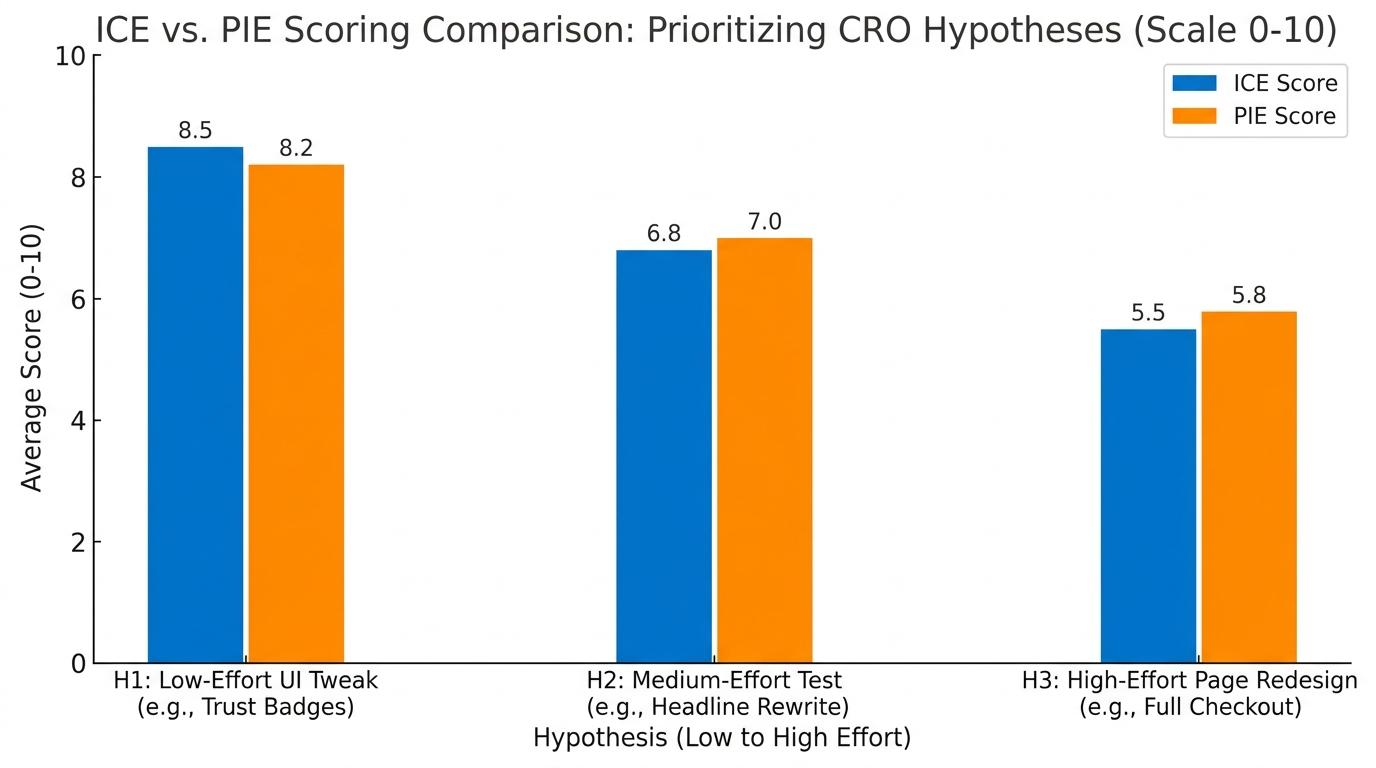

Every marketing team has far more testing ideas than they have developer hours available to execute them. Without a strict mathematical model to rank these hypotheses, the testing queue inevitably devolves into a political battle where the highest-paid person's subjective opinion wins. You need a framework that forces objectivity.

Scoring hypotheses with the PIE framework

The PIE framework evaluates every potential test across three distinct criteria on a scale of one to ten. It removes emotion from the planning process and forces teams to justify their ideas with logic.

Your Potential score estimates the realistic improvement ceiling for a specific page. A checkout page with an 80% drop-off rate scores a nine or ten because the ceiling for improvement is massive. A well-optimized product page converting at 15% might only score a three.

Your Importance score factors in traffic volume and the business value of the affected pages. Modifying the primary navigation menu scores high on importance because it impacts every visitor. Tweaking the layout of a legacy blog post with twelve monthly visits scores a one.

Your Ease score asks how many technical and organizational resources the test requires. Changing the color of an 'Add to Cart' button requires three minutes of a marketer's time in a visual editor, scoring a ten. Rebuilding the entire cart logic requires four weeks of backend developer sprints, scoring a two.

Average the three numbers together to generate a final composite score. The ideas with the highest overall scores move directly to the front of the testing queue. The final ranking ensures you tackle the lowest-effort, highest-impact changes first, banking early wins to build momentum.
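The scoring and ranking step can be sketched in a few lines. The ideas and scores below are made-up examples in the spirit of the ones above, and the `Idea` class is a hypothetical helper, not part of any testing tool.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    """One test idea scored from 1 to 10 on each PIE criterion."""
    name: str
    potential: int
    importance: int
    ease: int

    @property
    def pie_score(self) -> float:
        # PIE composite: the simple average of the three scores.
        return (self.potential + self.importance + self.ease) / 3


# Illustrative backlog; the scores are placeholders your team would debate.
backlog = [
    Idea("Change add-to-cart button color", potential=3, importance=7, ease=10),
    Idea("Rebuild cart logic", potential=8, importance=8, ease=2),
    Idea("Simplify checkout form", potential=9, importance=8, ease=6),
    Idea("Tweak legacy blog layout", potential=4, importance=1, ease=8),
]

# Highest composite score goes to the front of the testing queue.
ranked = sorted(backlog, key=lambda idea: idea.pie_score, reverse=True)
for idea in ranked:
    print(f"{idea.pie_score:.1f}  {idea.name}")
```

Keeping the three raw scores alongside the composite makes it easier to revisit a ranking later, for example when developer availability changes the Ease score.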

The ICE framework alternative

Some teams prefer the ICE framework, which replaces Potential and Importance with Impact and Confidence while retaining the Ease metric. It operates on a similar ten-point scale but shifts the focus toward historical data.

Your Impact score estimates the effect on your core conversion metric.

Confidence forces a reality check. It asks how sure you are that the change will work, based strictly on previous test results, behavioral data, or clear user feedback. You can't score a nine on confidence just because you read a blog post about a tactic. High confidence requires empirical evidence that the specific friction exists on your site.

Aligning cross-functional teams around data

Designers want beautiful interfaces. Developers want stable, maintainable code. Marketers want immediate revenue impact. These competing priorities stall optimization efforts.

A strict scoring framework changes how teams interact. When a digital marketing director demands a massive homepage redesign because they dislike the aesthetic, the framework forces a conversation about ease and impact. If a high-effort redesign scores lower than a low-effort checkout fix, the data dictates the schedule.

The math settles the debate. Prioritization models ensure your team spends its limited resources fixing the exact leaks that cost you the most money.

PIE Versus ICE Framework Comparison

| Comparison Point | PIE Framework | ICE Framework |
|---|---|---|
| Core Variables | Potential, Importance, Ease | Impact, Confidence, Ease |
| Primary Driver | Traffic volume and page value | Empirical evidence and past results |
| Data Requirement | Baseline traffic and basic analytics | Historical test data and benchmarks |
| Strategic Edge | Isolates highest potential revenue ceilings | Prevents low-probability testing waste |

Step-by-step A/B testing workflow and hypothesis design

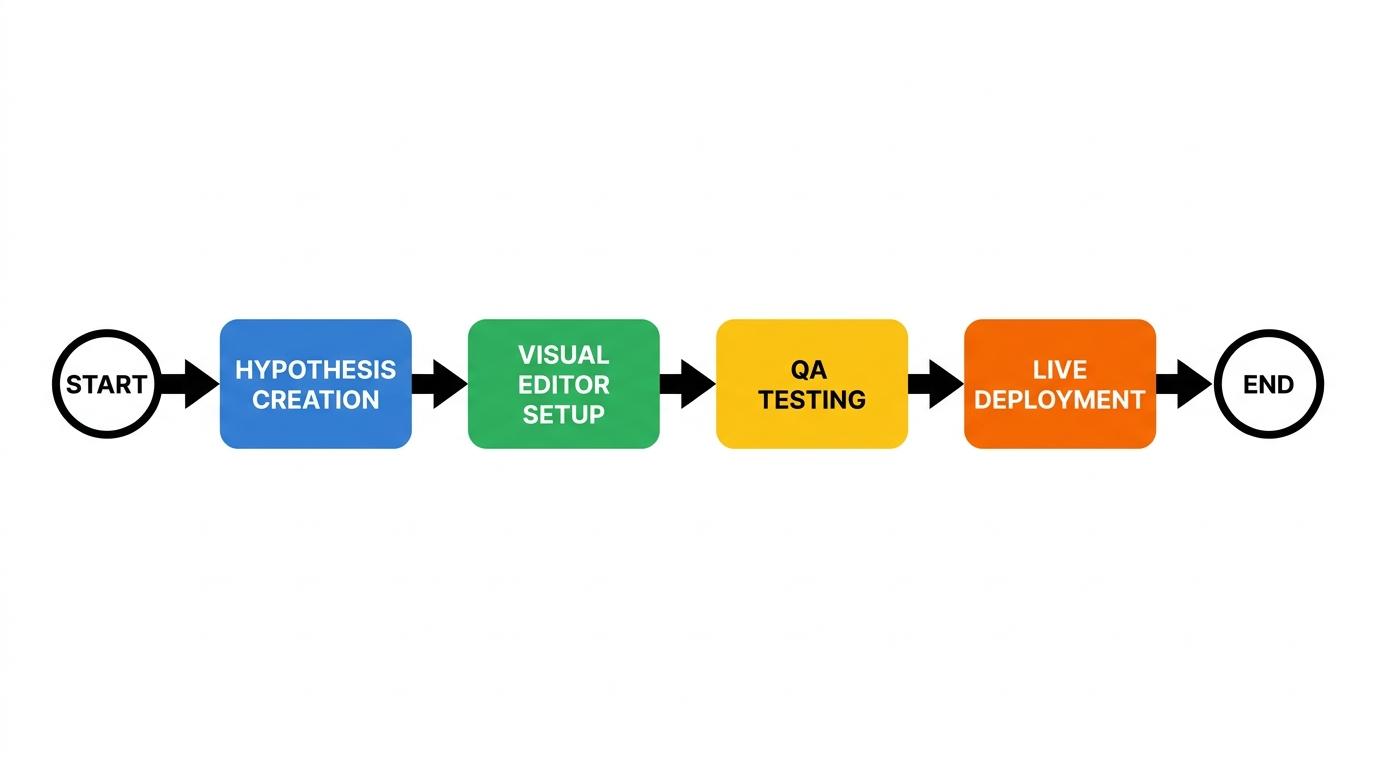

Your prioritized list of friction points is just a backlog until you actually test it. Moving from analytical observation to live execution requires a structured testing workflow that separates viable, data-backed experiments from arbitrary design changes. Far too many teams throw a new button color onto a page just to see what happens. That unstructured approach wastes valuable traffic and provides zero lasting institutional knowledge.

Structuring a testable hypothesis

We start every split test with a formal hypothesis. Use a simple structural format to force clarity: 'If [Variable], then [Result], because [Rationale].'

The variable is the specific element you plan to change. The result is the metric you expect to improve. The rationale grounds the test in the behavioral data you gathered during your heuristic evaluation.

Skip vague proposals like 'let's redesign the pricing table' and write a strict, testable statement. 'If we default the pricing toggle to annual billing, then we will increase average order value by 15%, because heatmaps show users currently ignore the annual discount banner.' This format dictates exactly what you will build, defines the precise metric you intend to move, and establishes exactly how you will judge success or failure. It prevents scope creep and ensures the development team knows the exact behavioral problem they are solving.

Balancing velocity with technical constraints

Many marketing teams evaluating testing software find themselves blocked by six-figure enterprise contracts for top-tier platforms. They have hypotheses ready to go but lack the budget for massive tech deployments. We've found you can run a highly effective testing program without that overhead.

Developer bottlenecks frequently stall testing velocity. When a marketing manager wants to improve a core landing page, waiting three weeks for frontend engineering availability kills programmatic momentum. You need tools that allow marketing teams to deploy straightforward changes independently. VWO lets you run visual A/B and multivariate tests without touching the code layer. Crazy Egg pairs native A/B testing with a visual editor so you can manipulate DOM elements directly on the live page. For completely net-new experiences, using a dedicated drag-and-drop page builder like Unbounce lets you spin up a fresh variation and launch a split test in a matter of hours.

Choose the tool that matches your team's technical reality. The best platform is the one that lets you launch tests frequently.

Running pre-launch quality assurance

Launching a broken test corrupts your data and damages the user experience. A misaligned CSS element on mobile can artificially suppress the variant's conversion rate, leading you to reject a hypothesis that actually works.

Always run QA checks before pushing traffic to a new variant. Verify the layout on actual mobile devices, not just browser emulators. Test the page on a throttled 3G connection to ensure your testing script doesn't cause a severe flicker effect before loading. Submit test transactions through your modified checkout flow to confirm macro conversions still track correctly in your analytics platform. Pre-launch technical checks save weeks of wasted traffic.

Aligning conversion rate optimization with SEO intent

Traffic acquisition and conversion optimization often operate in silos. The SEO team focuses on ranking for high-volume keywords, while the CRO team tries to force those visitors through a generic funnel. In failing campaigns, the disconnect almost always starts here.

Mapping landing pages to query intent

What does someone typing 'best waterproof hiking boots' actually want to see? Probably a comparison guide or a filtered category page. If your search ad drops them onto a specific product page for a single brand of boot, you have violated their search intent.

Align your landing page copy directly with the specific query that drove the click. The headline must match the exact promise made in the search snippet to maintain momentum. If the user searched for 'fastest CRM implementation,' your above-the-fold copy should aggressively highlight your 24-hour setup time rather than generic software features. When the destination fails to mirror the searcher's original intent, users bounce immediately. In our experience, the gap between ranking well and actually converting is almost always an intent-mapping failure, not a content quality issue.

The compounding impact of technical speed

Slow pages block conversions before your copy even loads. Technical friction directly reduces the ROI of your acquisition budget.

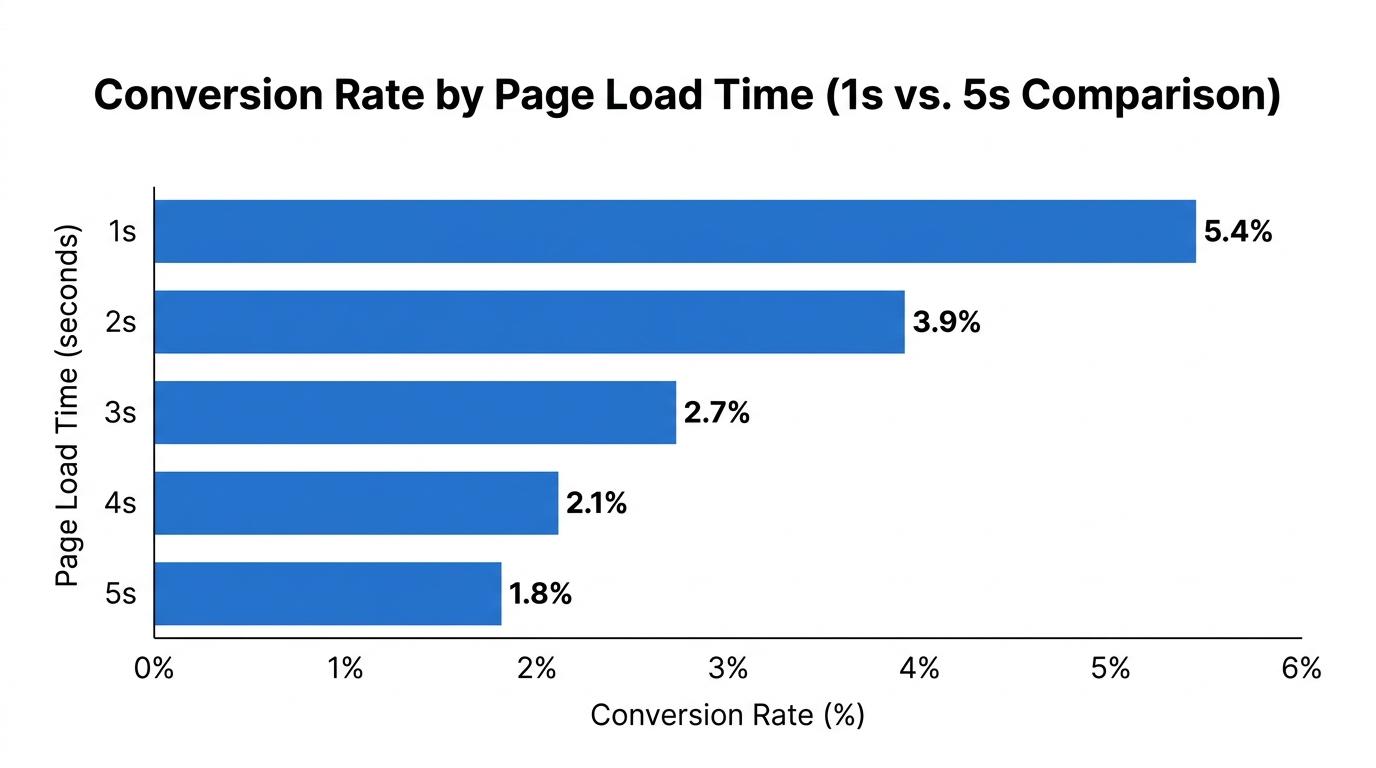

The relationship between load time and revenue is measurable. A B2B page loading in 1 second achieves a 3x higher conversion rate than one loading in 5 seconds. Every additional second of delay causes a compounding drop in user patience.

The same pattern appears in broader technical performance metrics. A widely cited Akamai study shows that a mere 100-millisecond delay in load time can reduce conversion rates by 7%. Google reports that websites meeting Core Web Vitals standards experience 24% lower abandonment rates and up to 30% higher conversions than sites failing these metrics. Speed is not just an SEO requirement; it is a fundamental conversion feature.

Protecting visual stability

Marketing teams love email capture pop-ups. Users hate them. Beyond the psychological friction, aggressive pop-ups often damage your Core Web Vitals by causing layout shifts.

When a delayed modal forces the main content downward just as the user attempts to click a product link, you create a rage-click scenario. That visual instability also worsens the page's Cumulative Layout Shift (CLS) score, one of the Core Web Vitals. To protect both the experience and the metric, delay your pop-ups until the user demonstrates exit intent or scrolls past a significant depth threshold. Never let a secondary conversion goal interrupt the primary user experience.

Actionable optimization tactics for high-impact pages

To fix a leaky funnel, you have to find the exact moments where user motivation drops below the friction of the required task. Optimization sprints usually start at the absolute bottom of the funnel and strategically work up. Improvements closest to the final transaction—like cart layout or checkout fields—consistently yield the highest immediate revenue impact for the least amount of effort.

Streamlining the checkout experience

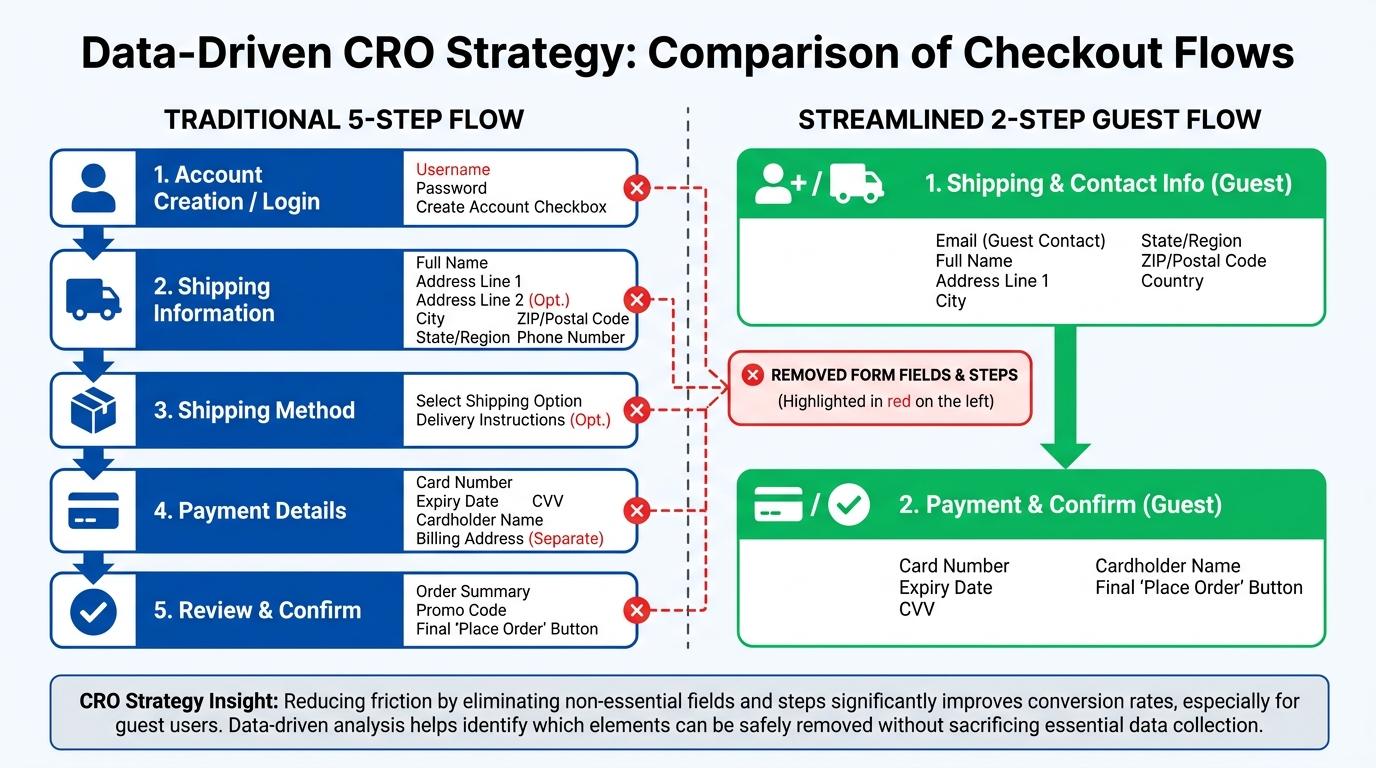

Analytics frequently show healthy add-to-cart metrics followed by a massive drop-off during the actual checkout phase for non-registered users. A cumbersome checkout process causes high abandonment right at the finish line.

Forced account creation is one of the clearest causes. The Baymard Institute found that 19% of online shoppers abandon their carts specifically because they are forced to create an account.

Remove the password creation field from your primary flow. Offer guest checkout as the default option. Once the transaction completes and the payment clears, you can invite the user to save their details for next time. Standardizing around highly optimized flows pays off. Shopify reports that Shop Pay, its accelerated checkout, lifts conversions by up to 50% relative to standard guest checkouts by eliminating unnecessary form fields and trusting known device profiles.

Positioning above-the-fold value propositions

Place your most persuasive argument above the scroll line. Users form their primary impression of a page within the first few seconds of rendering.

Ecommerce product pages frequently bury the primary persuasive benefit—whether it's an unconditional lifetime warranty, free two-day shipping, or a proprietary unique material—in a dense paragraph far beneath the fold. Move your core value proposition up into the immediate visual hierarchy. Use a clear, descriptive headline paired with a tightly written bulleted list of three primary benefits placed directly next to the main product image.

Deploying social proof strategically

A generic 'Customer Reviews' page does nothing for the user hesitating on a pricing tier. Social proof only works when placed precisely at the point of highest friction.

If users abandon carts because of shipping costs, place a testimonial praising your fast delivery directly below the shipping calculator. If B2B buyers hesitate at a high-ticket software purchase, display logos of recognizable current clients immediately above the primary call-to-action. Trust signals function best as contextual tools, deployed specifically to counter the exact objection a user has at that exact moment in their journey.

Measuring statistical significance and analyzing test results

Split tests fail if you misinterpret the outcome. Dashboard numbers fluctuate wildly in the first few days of an experiment, tempting marketers to call a winner prematurely. Discipline at this stage separates rigorous optimization from arbitrary guessing.

The necessity of statistical significance

Don't peek at your results and stop a test just because the variant looks promising on day three. Wait for 95% statistical significance before declaring a winner.

Statistical significance does not prove that your change caused the lift. It tells you that a difference this large between control and variant would be unlikely if only random chance were at work. At the 95% level, you accept roughly a 1-in-20 risk of seeing such a difference when the change actually had no effect. Premature test conclusions raise that risk sharply and frequently result in false positives. You implement the 'winning' design, push it live to 100% of your traffic, and watch your actual conversion rate drop because the test never reached mathematical validity.
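For readers who want to check a result by hand, here is a minimal sketch of one common approach, a two-sided two-proportion z-test, using only the Python standard library. It is not necessarily what your testing platform computes (many use Bayesian or sequential methods), and the visitor and conversion counts are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a / n_a: conversions and visitors in the control.
    conv_b / n_b: conversions and visitors in the variant.
    Returns (z, p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: 2.0% control vs 2.6% variant, 10,000 visitors each.
z, p_value = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
significant = p_value < 0.05  # 95% significance threshold
print(f"z = {z:.2f}, p = {p_value:.4f}, significant at 95%: {significant}")
```

This calculation assumes you fixed the sample size in advance and checked the result once. Running it every day and stopping at the first p-value below 0.05 reintroduces the peeking problem described above.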

Accounting for business cycles

Traffic quality changes depending on the day of the week. B2B traffic generally spikes on Tuesdays and dies on weekends. Ecommerce traffic might surge on Friday nights.

Always run your tests in increments of full seven-day weeks to account for these natural business cycles. If you run a test from Monday to Thursday, your data is skewed. A minimum testing duration of two full weeks ensures you capture a representative sample of all your buyer personas, regardless of when they prefer to shop.
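To plan a duration before launch, you can estimate the sample each variant needs and round up to whole weeks. The sketch below uses the standard normal-approximation formula for comparing two proportions. The 80% power setting, the expected lift, and the traffic figures are assumptions you would replace with your own, and both function names are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def duration_in_weeks(n_per_variant, daily_visitors, variants=2, min_weeks=2):
    """Round the required run time up to full weeks, never below min_weeks."""
    days = ceil(n_per_variant * variants / daily_visitors)
    return max(min_weeks, ceil(days / 7))


# Hypothetical plan: 2% baseline, hoping to detect a 20% relative lift,
# with 1,500 eligible visitors per day split across control and variant.
n = sample_size_per_variant(baseline_rate=0.02, relative_lift=0.20)
weeks = duration_in_weeks(n, daily_visitors=1_500)
print(f"{n} visitors per variant, run for {weeks} full weeks")
```

Smaller expected lifts push the required sample up quickly, which is why low-traffic pages often cannot support tests of subtle changes within a reasonable time frame.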

Documenting the losing variants

Most of your hypotheses will fail.

The average win rate for A/B tests typically ranges between 12% and 20%. For example, an analysis of over 127,000 experiments by Optimizely found an average win rate of just 12%. That means roughly 80% to 88% of tests fail to produce a statistically significant positive lift.

Failure is a required component of the methodology. When a variant loses, document exactly what you tested and why you think it failed. A losing test tells you that your users don't care about the variable you changed. That insight prevents your team from wasting time debating similar changes in the future. Log every test — win, lose, or draw — into a central repository to ensure the entire marketing department learns from the outcome.

Frequently asked questions

What is considered a good conversion rate for my industry?

How long does it typically take for CRO to show results?

Can small businesses benefit from implementing CRO strategies?

How do you calculate the ROI of your CRO initiatives?

Building a culture of continuous optimization

Optimization isn't a project with a completion date. The most profitable companies treat it as a permanent operational function. A conversion rate optimization strategy replaces arbitrary site redesigns with continuous iteration.

Transitioning to an optimization mindset

Your first successful split test changes how you view website traffic. Low conversions stop looking like an unsolvable mystery and start looking like specific behavioral bottlenecks you can isolate and remove.

You shift from asking 'what should our new homepage look like?' to asking 'what specific user objection is our current homepage failing to answer?' That shift ends the internal political battles over design preferences. When every idea must survive the PIE prioritization framework and achieve 95% statistical significance, data completely replaces opinion.

Compounding institutional knowledge

The real value of an optimization program is the repository of customer psychology it generates over time.

Every split test you run directly teaches you how your unique audience actually behaves in the wild. You learn exactly which value propositions motivate them to click, which redundant form fields cause them to abandon the session, and which precise trust signals actually influence their final buying decisions. That institutional knowledge rapidly compounds over time. The behavioral insights you gain from a failed checkout test will eventually inform the targeted copy you write for your next paid search ad campaign.

Avoid paying high acquisition costs only to drop those users into an unoptimized funnel. Audit your raw behavioral data, map out your highest-impact friction points using a strict prioritization framework, and start methodically testing your hypotheses. The traffic is already visiting your website daily. You just need to clear the roadblocks so they can actually convert.
